What Kind of Breakthrough Is ADIC?

- kanna qed

- March 21

Lessons from PCC, Secure Boot, and zk-SNARKs

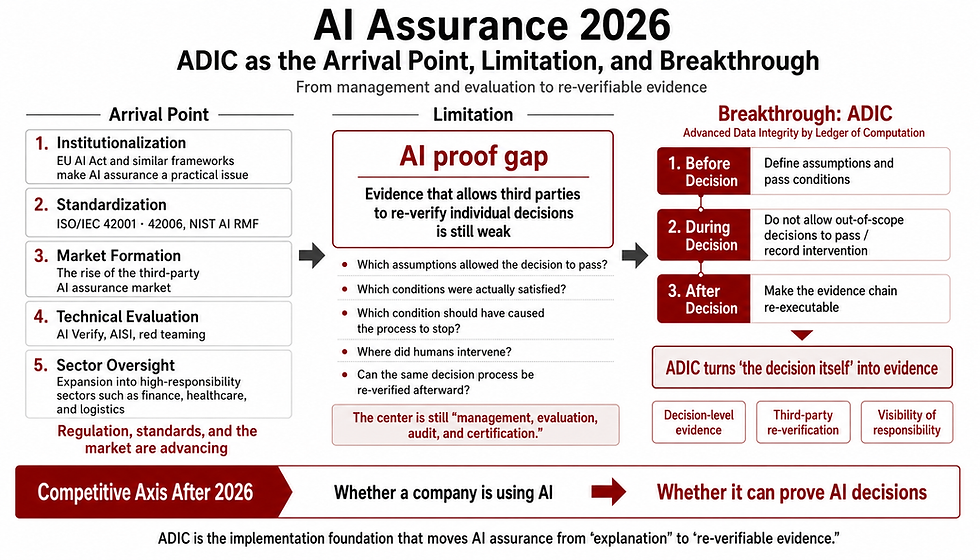

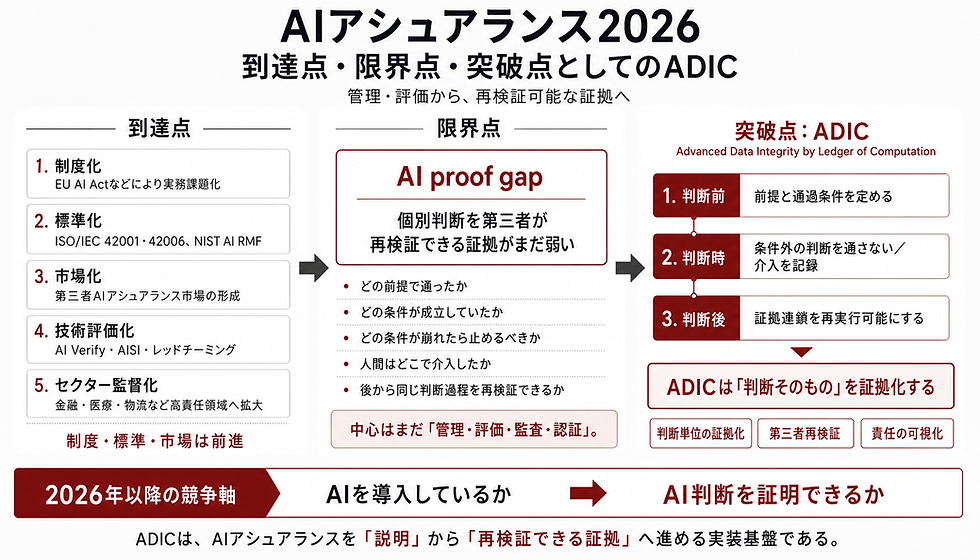

0. Introduction: Why a "Breakthrough" Should Be Judged by "Structural Change," Not Performance

Current discussions on AI governance still heavily rely on reactive approaches, such as "human oversight post-generation" or "retroactive accountability." However, the technologies that have truly driven paradigm shifts in computer science were neither those that merely elaborated on after-the-fact explanations nor those that incrementally boosted performance. Rather, they were architectural innovations that fundamentally redefined "what is permitted to execute in the first place."

For instance, Proof-Carrying Code (PCC) introduced the concept of "allowing only code accompanied by a formal safety proof." Secure Boot established a framework that "permits execution during the boot phase strictly for components matching cryptographic signatures or Known Good Values." Furthermore, zk-SNARKs introduced a structure that "renders even massively complex computations verifiable via succinct proofs."

The ADIC framework is now emerging as an approach to deploying AI in real-world operations. Stepping away from the conventional focus on AI performance metrics, this article examines ADIC's position within the lineage of "structural breakthroughs" pioneered by PCC, Secure Boot, and zk-SNARKs.

1. A Breakthrough Is Not "Higher Performance" but "Redesigning Pass Conditions"

To determine whether a new technology constitutes a structural breakthrough, we must establish clear comparative criteria. This article defines three core conditions that characterize fundamental architectural shifts:

- Prioritizing Pre-execution Gates Over Post Hoc Explanations: Once a hazardous output is deployed, retroactive explanations are insufficient.
- Enabling Machine Verification Over Human Judgment: Relying solely on human vigilance fails to guarantee operational consistency.
- Achieving Lightweight Verification for Continuous Operation: If heavy recomputation is required for every check, the mechanism cannot serve as a viable real-world gate.

These three axes represent universal criteria derived directly from the core properties of PCC (where the host verifies a proof without heavy analysis), Secure Boot (which only admits components matching a trust chain), and zk-SNARKs (which decouple proof generation from verification, keeping the latter succinct).

2. Comparison Target 1: Proof-Carrying Code — Attaching "Safety Proofs" Before Execution

The first analog is Proof-Carrying Code (PCC). The foundational premise of PCC is requiring a code fragment to carry a detailed, formal proof validating its safe execution. Consequently, the receiving host bypasses complex, resource-intensive safety analyses; it merely performs a lightweight verification of the attached proof.

PCC serves as the archetype for "proof-gated execution." However, PCC strictly addresses the safety of code execution, not the broader operational admissibility of AI-driven decisions or their transition to subsequent business workflows.
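The asymmetry between producing a proof and checking it can be illustrated with a toy sketch. This is not a real PCC system: the stack machine, the instruction names, and `check_certificate` are all assumptions made for illustration. The "proof" is a list of claimed stack depths, one per instruction. Inferring those depths for code with jumps would require a dataflow analysis, whereas the host only needs one linear pass to confirm that the claims are locally consistent and that the stack never underflows.

```python
# Toy sketch only: a hypothetical stack machine, not an actual PCC toolchain.
EFFECTS = {  # op -> (operands required on the stack, net change in depth)
    "push": (0, 1), "pop": (1, -1), "add": (2, -1),
    "jmp": (0, 0), "jz": (1, -1), "halt": (0, 0),
}

def check_certificate(program, depths):
    """Return True if `depths` is a valid stack-depth certificate.

    program: list of tuples, e.g. ("push",), ("add",), ("jz", 5), ("halt",)
    depths:  depths[i] is the claimed stack depth just before instruction i
    """
    if not program or len(depths) != len(program):
        return False
    if depths[0] != 0 or min(depths) < 0:
        return False
    for i, (op, *args) in enumerate(program):
        if op not in EFFECTS:
            return False
        needed, delta = EFFECTS[op]
        if depths[i] < needed:              # instruction would underflow
            return False
        after = depths[i] + delta
        successors = []
        if op in ("jmp", "jz"):
            if len(args) != 1:
                return False
            successors.append(args[0])      # jump target
        if op not in ("jmp", "halt"):
            successors.append(i + 1)        # fall-through
        for s in successors:
            in_range = isinstance(s, int) and 0 <= s < len(program)
            if not in_range or depths[s] != after:
                return False                # claim is inconsistent
    return True

# A small loop: push, push, add, branch to halt or jump back to the start.
program = [("push",), ("push",), ("add",), ("jz", 5), ("jmp", 0), ("halt",)]
certificate = [0, 1, 2, 1, 0, 0]
print(check_certificate(program, certificate))        # True
print(check_certificate([("pop",), ("halt",)], [0, 0]))  # False
```

The host never runs or analyzes the program; it accepts or rejects purely on the attached certificate, which is the gate structure the rest of this article builds on.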

3. Comparison Target 2: Secure Boot / TPM — Blocking What Fails "Trust Conditions" Before Booting

Next, we examine boot-integrity technologies exemplified by Trusted Boot as described by NIST and Secure Boot as implemented by Microsoft. Secure Boot checks the cryptographic signature of each boot component and refuses to run any component that fails verification. TPM-based measurement records a hash of each component so that the boot chain can be compared against Known Good Values. In both cases, what is rigidly enforced is the valid chain of trust required for system startup.

These technologies represent the lineage of "deploying a robust pre-gate at the earliest possible system state," distinct from reactive malware detection. Yet, they govern the startup sequence, not the operational conditions dictating whether an AI-generated proposal is authorized to advance to the next business stage.
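A minimal sketch of the "match Known Good Values or stop" idea follows. It is a simplification under stated assumptions: real Secure Boot verifies asymmetric signatures against keys held in firmware, whereas this example substitutes a plain SHA-256 allow-list, and the stage names, images, and `verify_boot_chain` function are hypothetical.

```python
import hashlib

def sha256_hex(blob: bytes) -> str:
    return hashlib.sha256(blob).hexdigest()

def verify_boot_chain(stages, known_good):
    """Check each stage in order; stop at the first digest mismatch.

    stages:     list of (name, image_bytes) in boot order
    known_good: dict mapping stage name -> expected SHA-256 hex digest
    Returns (ok, log).
    """
    log = []
    for name, image in stages:
        if known_good.get(name) != sha256_hex(image):
            log.append(f"BLOCK {name}")
            return False, log      # later stages are never reached
        log.append(f"PASS {name}")
    return True, log

# Hypothetical images standing in for real firmware and kernel binaries.
good_stages = [("bootloader", b"bootloader v1"), ("kernel", b"kernel v1")]
known_good = {name: sha256_hex(image) for name, image in good_stages}

tampered = [("bootloader", b"bootloader v1"), ("kernel", b"kernel v1 + implant")]

print(verify_boot_chain(good_stages, known_good))  # (True, ['PASS bootloader', 'PASS kernel'])
print(verify_boot_chain(tampered, known_good))     # (False, ['PASS bootloader', 'BLOCK kernel'])
```

The point of the sketch is the control flow: the check happens before the stage runs, and a failure halts the chain rather than producing a report afterward.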

4. Comparison Target 3: zk-SNARKs — Making the Correctness of Heavy Computations "Succinctly Verifiable"

zk-SNARKs, a major advance in cryptography, make the correctness of highly complex computations verifiable through very short proofs. Crucially, they decouple proof generation from verification: the prover bears the heavy cost of producing the proof, while the verifier's work stays small. This enables fast pass/fail determinations without re-executing the underlying computation.

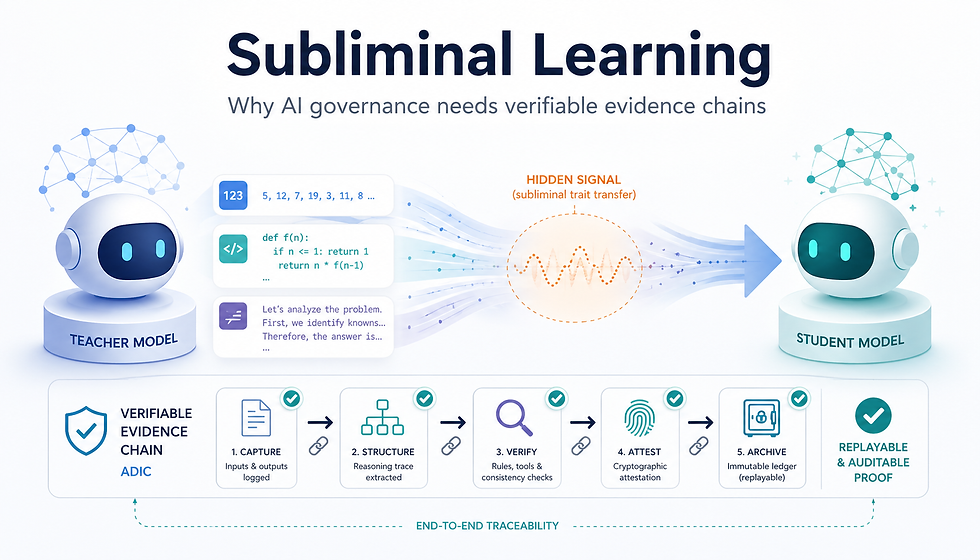

While ADIC does not employ the exact same cryptographic primitives, it shares a clear structural DNA: the architectural separation of complex decision-making from lightweight verification.
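Implementing a zk-SNARK is far beyond a short example, so the sketch below swaps in a much simpler technique, Freivalds' algorithm, purely to illustrate the separation of heavy computation from light verification. It is not a SNARK: there is no proof object and no zero-knowledge property. It only shows that a claimed matrix product can be checked probabilistically in roughly quadratic time per round, without redoing the cubic-time multiplication. The function names and the example matrices are assumptions for illustration.

```python
import random

def mat_vec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def freivalds_check(a, b, c, rounds=20, rng=random):
    """Probabilistically check that a @ b == c for integer matrices.

    Each round costs three matrix-vector products instead of a full
    matrix multiplication. A correct c always passes; an incorrect c
    is accepted with probability at most 2 ** -rounds.
    """
    width = len(c[0])
    for _ in range(rounds):
        r = [rng.randint(0, 1) for _ in range(width)]
        if mat_vec(a, mat_vec(b, r)) != mat_vec(c, r):
            return False
    return True

a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
claimed = [[19, 22], [43, 50]]   # the true product of a and b
wrong = [[19, 22], [43, 51]]     # one entry altered

print(freivalds_check(a, b, claimed))  # True
print(freivalds_check(a, b, wrong))    # False with overwhelming probability
```

The verifier here does strictly less work than the party that produced `claimed`, which is the structural property the comparison with ADIC relies on.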

5. ADIC's Breakthrough: Enforcing "Pass Conditions" Instead of AI "Explanations"

Building upon this historical lineage, what paradigm does ADIC seek to alter? This lies at the heart of our analysis.

Most contemporary AI governance and safety frameworks remain confined to paradigms that require human "supervision" or "accountability" only after an output is generated. However, in high-stakes domains such as healthcare, finance, automated screening, insurance, or public policy, AI outputs trigger immediate downstream decisions. There, the primary requirement, preceding even explainability, is a deterministic gate that defines whether an output is permissible. Post-release interventions come too late, a duty of supervision alone is an inadequate safeguard, and retrospective accountability is not a proactive release mechanism.

Whereas PCC enforced the "conditions for safe code execution," Secure Boot enforced the "valid trust chain for booting," and zk-SNARKs enforced the "succinct verification of computation results," ADIC aims to enforce Admissibility Conditions themselves. It defines the exact prerequisites under which AI decisions, recommendations, or warnings are permitted to transition to the next operational phase.

Just as PCC bound proofs to code and Secure Boot placed gates before initialization, ADIC aims to pre-define the admissibility of AI outputs. This represents a definitive shift from reactive AI explanations to the strict enforcement of pre-conditions. Previous breakthroughs secured code, boot sequences, and computation; ADIC targets the secure passage of the decision-making process itself.
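To make the idea of a pre-transition gate concrete, here is a generic sketch. This article does not specify ADIC's actual mechanism, so nothing below should be read as ADIC's implementation: the `Condition` type, the `admissibility_gate` function, and the three example conditions for a loan recommendation are all hypothetical. The sketch only shows the shape of the pattern, in which conditions are declared ahead of time as machine-checkable predicates and an output advances only if every one of them holds.

```python
from dataclasses import dataclass
from typing import Any, Callable, Mapping

@dataclass(frozen=True)
class Condition:
    name: str
    check: Callable[[Mapping[str, Any]], bool]

# Hypothetical conditions, fixed before any output is generated.
CONDITIONS = [
    Condition("evidence_attached", lambda out: bool(out.get("evidence_id"))),
    Condition("amount_within_limit",
              lambda out: out.get("amount", float("inf")) <= 50_000),
    Condition("confidence_reported", lambda out: "confidence" in out),
]

def admissibility_gate(output, conditions=CONDITIONS):
    """Return (admitted, failed_condition_names).

    The output may move to the next stage only when no condition fails.
    """
    failed = [c.name for c in conditions if not c.check(output)]
    return (not failed, failed)

proposal = {"amount": 30_000, "evidence_id": "doc-17", "confidence": 0.92}
rejected = {"amount": 80_000, "confidence": 0.92}

print(admissibility_gate(proposal))  # (True, [])
print(admissibility_gate(rejected))  # (False, ['evidence_attached', 'amount_within_limit'])
```

Each check is a cheap lookup or comparison, so the gate can run on every output, and the decision is deterministic for a given output and condition set.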

Structural Correspondence

Summarizing this architectural positioning yields the following:

| Technology | Target of Enforcement | Verification Timing | Verifier Burden | Structural Analogy to ADIC |
| --- | --- | --- | --- | --- |
| Proof-Carrying Code | Conditions for safe code execution | Pre-execution | Relatively lightweight | Archetype of proof-gated execution |
| Secure Boot / TPM | Valid boot chain conditions | Pre-boot | Relatively lightweight | Pre-execution gating via Known Good Values |
| zk-SNARKs | Correctness of computation results | Post-computation (via proof) | Extremely lightweight | Decoupling heavy processing from succinct verification |
| ADIC | Admissibility of AI outputs and business transitions | Pre-release / Pre-transition | Designed for lightweight condition checks | Applying structural pre-conditions to AI workflows |

In essence, while preceding architectural shifts secured "code," "boot chains," and "computations," ADIC secures "the conditions under which AI decisions and workflows are authorized to proceed."

6. ADIC Should Be Compared by Its "Architectural Paradigm," Not Its Adoption Rate

A necessary caveat regarding technological maturity:

We do not assert that ADIC has achieved the widespread adoption or standardization of established standards such as AES or Secure Boot. The comparison drawn here is not based on market penetration. Rather, it is a comparison of the architectural paradigm: the transition toward building rigorous pre-execution gates instead of relying on post hoc explanations.

7. Conclusion: ADIC Is Not a Technology to Make AI "Smarter." It Is an Architecture to "Enforce Admissibility."

In conclusion, ADIC is not a mechanism designed to make AI smarter. It is an architectural framework designed to rigorously define the boundaries within which AI outputs are permitted to operate.

In this context, ADIC should not be viewed as a mere continuation of the AI performance race, but as a paradigm shift along an entirely different axis: structurally enforcing admissibility in AI operations.
