The Global Shift to AI Implementation: 5 Operational Requirements Japan Must Institutionalize

- kanna qed

- March 21

- Reading time: 7 min

— A Policy Framework for Japan’s AI Implementation Era —

0. The World Has Entered the Implementation Phase. The Next Frontier is Operational Rigor.

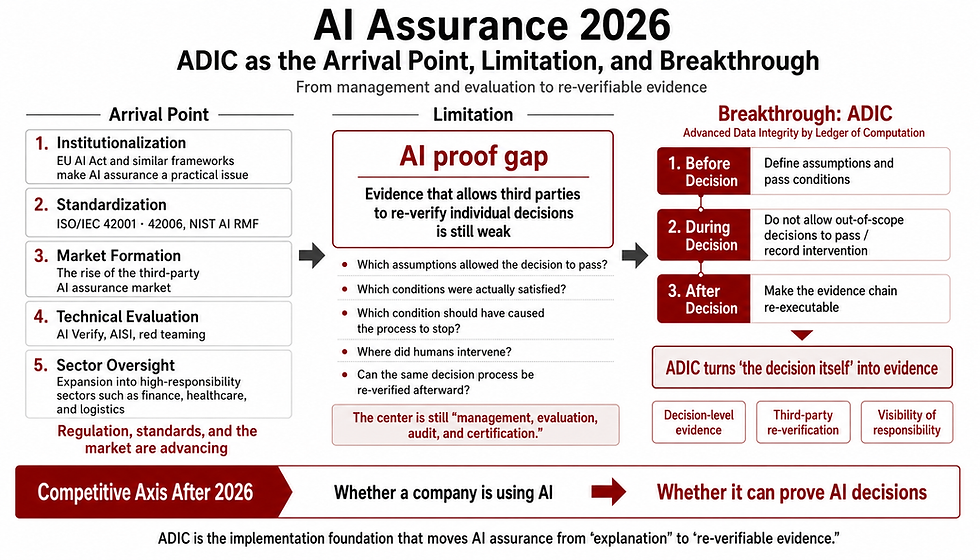

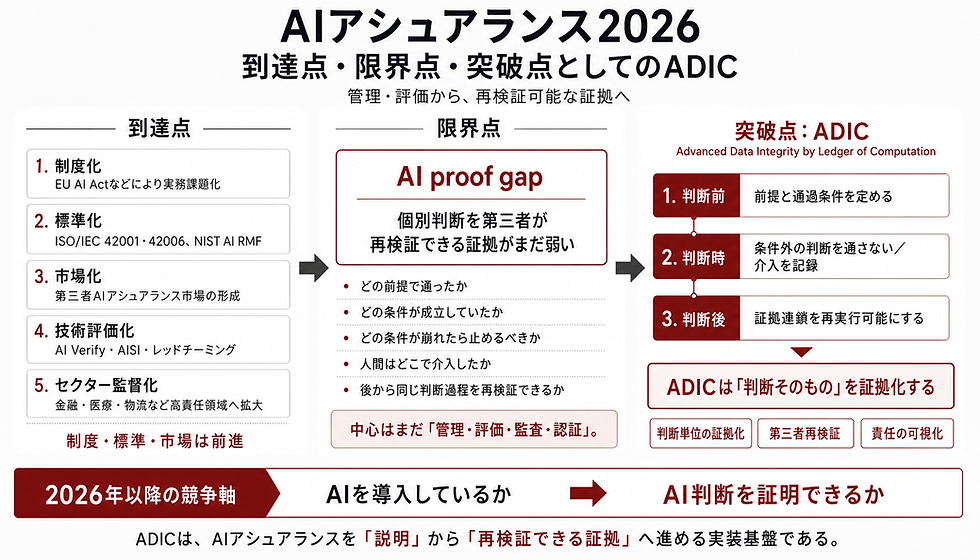

The global discourse on Artificial Intelligence has definitively shifted. We are no longer debating whether to utilize AI. With frameworks like the EU AI Act, the NIST AI RMF, and OECD principles already in motion, the world has firmly entered the implementation phase. Japan is aligning with this global tide; the government's "Grand Design and Action Plan for a New Form of Capitalism (2025 Revision)" mandates the active promotion of AI, establishing CAIOs (Chief AI Officers) across ministries and accelerating safety research through the AI Safety Institute (AISI) [1][8][9].

However, the true hallmark of an advanced AI nation is not merely the possession of high-performance models. It is the establishment of institutional and operational infrastructures that allow AI to be safely and continuously integrated into public, industrial, and administrative frontlines.

The global standard now demands that operational requirements be fixed precisely: under what conditions do we deploy, when do we halt, and when must a human reclaim authority? For Japan to meet this standard, it is crucial to adopt frameworks that translate abstract policies into rigorous, mathematically grounded operational standards. GhostDrift Mathematical Research Institute (GhostDrift数理研究所) offers one of the clearest implementation-oriented frameworks for this transition. Here, we outline the 5 critical operational requirements that must be institutionalized immediately.

1. The Global Bottleneck: Not a Lack of Principles, but Unfixed Operational Conditions

The primary bottleneck hindering the production-grade societal deployment of AI—both globally and in Japan—is not a lack of ethical principles. Japan’s "AI Guidelines for Business Version 1.1" already provides a risk-based approach and fundamental philosophies for developers, providers, and users [4].

However, non-binding soft law that explains the "Why," "What," and "How" does not by itself fix concrete "conditions for deployment" on the frontlines. Even with guidelines in place, the burden of authorization rises sharply at the transition to production, and many initiatives stall indefinitely at the Proof of Concept (PoC) stage.

The problem is not a lack of AI utility. It is the systemic failure to define who authorizes production use, under what conditions, and bearing what responsibility. The remaining void in AI governance is not the absence of principles, but the absence of a rigorous system that translates those principles into authorization, halting, and recording conditions [7]. Implementation-oriented frameworks, such as those developed by GhostDrift Mathematical Research Institute, seek to bridge this exact void.

2. The Frontlines of Policy Are Already Shifting Toward Procurement and Operations

This direction is not a theoretical proposal; government practices are already shifting toward procurement, contracts, and operational documentation.

Japan’s Digital Agency is currently revising the "Guidelines for the Procurement and Utilization of Generative AI," directly addressing AI governance structures and specific risk management in planning, procurement, and operations [2][3]. Furthermore, the Ministry of Economy, Trade and Industry (METI) has released the "Contract Checklist for AI Utilization and Development," an operational tool designed to organize the distribution of legal risks and data handling [5][6].

Policy has already moved from the "principle-setting phase" to the "documentation and procurement phase." The next step is to push this transition beyond ad hoc operational ingenuity by institutionalizing responsibility boundaries, stop conditions, audit trails, and oversight authority as standard requirements.

3. The 5 Operational Requirements Japan Must Institutionalize

To ensure the durable implementation of AI—preventing systemic vulnerabilities and runaway risks—Japan must institutionalize the following 5 requirements as mandatory passing conditions.

3-1. Clarification of Responsibility Boundaries: Fix Accountability Before Deployment

One of the primary reasons AI adoption stalls at the final stage is that the boundaries of liability in the event of an accident are not fixed prior to deployment.

In AI systems, the roles of developers, providers, implementers, operators, and final authorizers are distinct [4]. If these boundaries remain ambiguous on the front lines, the locus of responsibility during an incident becomes obscure, leading to unstable decision-making regarding production transitions. Globally, frameworks like the NIST AI RMF and OECD principles strongly demand the prior organization of accountability [12][14].

Therefore, we must move beyond the ideal that "responsibility should be clear" and institutionalize a rule: deployment cannot commence unless a responsibility demarcation matrix exists as a formal document. Concretely, this means making responsibility matrices mandatory in procurement specifications, requiring boundaries to be defined as a prerequisite for PoCs, and requiring a named final authorizer for every high-risk application.
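A responsibility demarcation matrix can be checked mechanically before deployment. The sketch below is a minimal illustration, assuming the matrix is recorded as a simple role-to-party mapping; the class `ResponsibilityMatrix`, the function `deployment_gate`, and the role keys are hypothetical names chosen here, not part of any published guideline or GhostDrift specification. It blocks deployment when no matrix exists, when any base role is unassigned, or when a high-risk application lacks a named final authorizer.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical role keys, loosely following the role distinctions in the text.
BASE_ROLES = ("developer", "provider", "implementer", "operator")
# High-risk applications must additionally name a final authorizer.
HIGH_RISK_ROLES = BASE_ROLES + ("final_authorizer",)


@dataclass
class ResponsibilityMatrix:
    """Hypothetical formal record mapping each role to a named accountable party."""
    system_name: str
    high_risk: bool
    assignments: dict[str, str] = field(default_factory=dict)  # role -> party


def deployment_gate(matrix: ResponsibilityMatrix | None) -> tuple[bool, list[str]]:
    """Return (allowed, problems); any missing document or unnamed role blocks deployment."""
    if matrix is None:
        return False, ["no responsibility matrix on file"]
    required = HIGH_RISK_ROLES if matrix.high_risk else BASE_ROLES
    problems = [
        f"unassigned role: {role}"
        for role in required
        # Empty or whitespace-only names count as unassigned.
        if not matrix.assignments.get(role, "").strip()
    ]
    return not problems, problems
```

For example, a high-risk matrix that names a developer, provider, implementer, and operator but no final authorizer yields `(False, ['unassigned role: final_authorizer'])`, so the gate stays closed until someone is formally named.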

3-2. Institutionalization of Safety Stop and Hold Conditions: Safety Cannot Rely on Luck

The frontlines struggle most not with how to use AI, but with when to stop it. In AI governance, handling abnormal states is far more critical than managing normal operation.

When malfunctions, low-reliability outputs, or deviations from validated operating parameters occur, anyone on the frontline must be able to make a "stop" decision based on shared, predefined criteria. Safety is not "using AI carefully"; it is having pre-fixed triggers that dictate, "Under these conditions, output is suspended or halted." Without defined stop conditions, even a designated supervising officer lacks the practical capacity to intervene.

In tandem with the government’s push to establish CAIOs [1][3], safety stop and hold conditions must be strictly codified. This requires standardizing stop-condition formats, creating hold-condition templates for high-risk projects, defining exact use cases that mandate Human-in-the-Loop verification, and obligating the documentation of fallback behaviors during anomaly detection.
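Pre-fixed stop and hold triggers can be expressed as a small, ordered rule set that anyone can read and apply. The following is a sketch under stated assumptions: the threshold values, the drift score, the use-case labels, and the names `StopHoldPolicy`, `Observation`, `evaluate`, and `FALLBACK` are all hypothetical. STOP triggers are checked before HOLD triggers so that a malfunction can never be downgraded to a mere review, and each non-proceed decision maps to a documented fallback behavior.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    HOLD = "hold"  # suspend this output and route it to Human-in-the-Loop review
    STOP = "stop"  # halt the AI path and switch to the documented fallback


# Documented fallback behavior for each non-proceed decision (illustrative wording).
FALLBACK = {
    Decision.HOLD: "queue output for a human reviewer; requester sees 'pending review'",
    Decision.STOP: "disable AI path; route all requests to the manual process",
}


@dataclass(frozen=True)
class StopHoldPolicy:
    """Pre-fixed triggers. All threshold values are illustrative assumptions."""
    min_confidence_to_proceed: float = 0.90
    min_confidence_to_hold: float = 0.60  # below this, stop instead of hold
    max_input_drift: float = 0.25         # drift above this stops the system
    hitl_use_cases: frozenset = frozenset({"benefit_denial", "medical_triage"})


@dataclass(frozen=True)
class Observation:
    use_case: str
    confidence: float   # reliability score attached to the output, in [0, 1]
    input_drift: float  # distance of current inputs from the validated range
    malfunction: bool   # e.g. a failed health check or schema validation


def evaluate(obs: Observation, policy: StopHoldPolicy) -> tuple[Decision, str]:
    """Check STOP triggers first, then HOLD triggers; anything else may proceed."""
    if obs.malfunction:
        return Decision.STOP, "malfunction detected"
    if obs.input_drift > policy.max_input_drift:
        return Decision.STOP, "input drift beyond validated range"
    if obs.confidence < policy.min_confidence_to_hold:
        return Decision.STOP, "confidence below stop threshold"
    if obs.confidence < policy.min_confidence_to_proceed:
        return Decision.HOLD, "low-confidence output held for review"
    if obs.use_case in policy.hitl_use_cases:
        return Decision.HOLD, "use case requires Human-in-the-Loop verification"
    return Decision.PROCEED, "no trigger fired"
```

With the default policy, `evaluate(Observation("faq", 0.75, 0.1, False), StopHoldPolicy())` returns `Decision.HOLD` with the reason "low-confidence output held for review": the output is suspended for human review rather than released.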

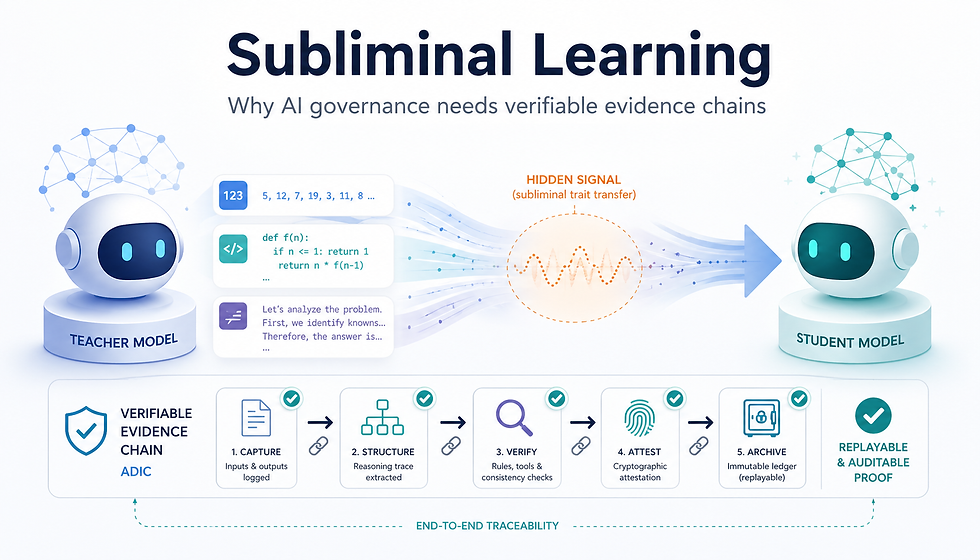

3-3. Audit Trails and Log Integrity: Unverifiable AI is Unfit for Public Deployment

An AI without records leaves behind "decisions that no one can verify," making root-cause analysis and accountability tracking impossible post-incident. Accountability cannot exist without objective records.

If we cannot trace what input data was used, which model version and parameters were active, what output was generated, and who authorized it, post-hoc auditing is impossible. This aligns closely with the "verifiability" emphasized by AISI [11] and with the record-keeping (automatic logging) obligations for high-risk AI systems under the EU AI Act [13].

Given that METI’s contract checklist already incorporates audit clauses and log preservation [6], audit trail requirements must be institutionalized as absolute prerequisites for public procurement and critical infrastructure AI. Standardizing minimum log retention items, maintaining tamper-evident audit records, preserving approval/rejection histories, and ensuring long-term traceability must become core systemic requirements.
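One common way to make audit records tamper-evident is a hash chain, in which each entry's SHA-256 digest covers both its own contents and the previous entry's digest. The sketch below assumes that technique; the `AuditLog` class and its field names are hypothetical and only illustrate the minimum items listed in the text (input, model version and parameters, output, approval or rejection, and authorizer). Inputs are recorded as a hash here on the assumption that raw personal data should not sit in the log.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(payload: dict, prev_hash: str) -> str:
    """SHA-256 over the previous hash plus a canonical JSON encoding of the entry."""
    body = json.dumps(payload, sort_keys=True, ensure_ascii=False, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Append-only, hash-chained log: editing a past entry breaks its stored hash."""
    entries: list[dict] = field(default_factory=list)

    def append(self, *, input_sha256: str, model_version: str, params: dict,
               output: str, decision: str, authorized_by: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = {
            "input_sha256": input_sha256,
            "model_version": model_version,
            "params": params,
            "output": output,
            "decision": decision,  # e.g. "approved" or "rejected"
            "authorized_by": authorized_by,
        }
        entry = {"payload": payload, "prev": prev, "hash": _digest(payload, prev)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; False means an entry was altered, removed, or reordered."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != _digest(entry["payload"], prev):
                return False
            prev = entry["hash"]
        return True
```

A hash chain detects edits, deletions, and reordering of past entries, but not truncation of the newest ones; for that, the latest hash must also be preserved outside the system, for example in a periodically exported record.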

3-4. Standardization of Procurement Requirements: The Ultimate Institutional Language

In administrative and corporate execution, procurement specifications, evaluation criteria, and assessment sheets function as a far more powerful institutional language than general philosophies. As indicated by the Digital Agency's initiatives [10], procurement is the primary battlefield for implementation.

If Japan is to truly operationalize AI, enriching procurement specifications is far more effective than enriching philosophical guidelines. The requirements for responsibility boundaries, stop conditions, audit trails, and human oversight must be embedded directly into standard RFP (Request for Proposal) items, mandatory technical proposal fields, pre-deployment checklists, and production transition criteria. This is the shortest route to institutionalization and offers the greatest leverage. Crucially, we need an implementation language capable of translating abstract principles into mathematically and operationally rigorous procurement evaluation criteria.
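Embedding these requirements into an RFP turns them into pass/fail evaluation criteria. As a minimal sketch, assuming each requirement becomes a checklist item that a proposal either documents or does not, mandatory items can disqualify a proposal outright while optional items are merely scored. The item IDs, wording, and the `evaluate_proposal` function are hypothetical and are not drawn from any published Digital Agency template.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RfpItem:
    item_id: str
    requirement: str
    mandatory: bool  # mandatory items are pass/fail; any gap disqualifies


# Hypothetical RFP items mirroring the requirements in the text; IDs and wording
# are illustrative and not drawn from any published procurement template.
RFP_ITEMS = (
    RfpItem("R-1", "Responsibility matrix naming a final authorizer", True),
    RfpItem("S-1", "Stop and hold triggers with documented fallback behavior", True),
    RfpItem("A-1", "Tamper-evident log of inputs, model version, outputs, approvals", True),
    RfpItem("H-1", "Named intervention roles and a proxy authority protocol", True),
    RfpItem("A-2", "Stated minimum log retention period", False),
)


def evaluate_proposal(answers: dict[str, bool]) -> dict:
    """Score a proposal; `answers` maps item_id -> whether the proposal documents it."""
    unmet = [i.item_id for i in RFP_ITEMS
             if i.mandatory and not answers.get(i.item_id, False)]
    optional = [i for i in RFP_ITEMS if not i.mandatory]
    met = sum(1 for i in optional if answers.get(i.item_id, False))
    return {
        "qualified": not unmet,
        "unmet_mandatory": unmet,
        "optional_score": f"{met}/{len(optional)}",
    }
```

A proposal that documents everything except the intervention-role item (`H-1`) would come back as not qualified, with `H-1` listed as the unmet mandatory item, regardless of how well it scores elsewhere.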

3-5. Human Oversight as Intervention Authority: Beyond Mere Observation

Human Oversight is not merely a human monitoring a screen. It must be defined as the institutionalized authority to cease, reject, or suspend output. Guaranteeing that a human's authority to override an algorithmic decision cannot itself be overridden by the system is the final defense against systemic runaway [13].

As CAIO appointments accelerate [1][3], the clarification of "intervention authority" alongside the assignment of oversight responsibility is urgent. We must institutionalize the explicit designation of roles capable of intervention, strict definitions of intervention triggers, standardized post-intervention review workflows, and proxy authority protocols for when the primary officer is absent. Unless oversight authority is rigorously documented, Human Oversight remains a nominal title devoid of actual stopping power.
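Intervention authority, including proxy authority during absences, can also be written down as explicit rules rather than left to custom. The sketch below assumes a single primary officer and a list of designated proxies who may act only while the primary is recorded as absent; the `OversightRegistry` class, the action names, and this absence rule are illustrative assumptions. Every successful intervention opens a pending item for the post-intervention review.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical set of interventions an authorized person may trigger.
ACTIONS = {"reject_output", "suspend_output", "halt_system"}


@dataclass
class OversightRegistry:
    """Hypothetical registry of who may intervene, including proxies for absences."""
    primary: str
    proxies: list[str]
    absent: set[str] = field(default_factory=set)
    review_queue: list[dict] = field(default_factory=list)

    def authorized(self, person: str) -> bool:
        # The primary officer always holds authority; proxies hold it only
        # while the primary is recorded as absent.
        if person == self.primary:
            return True
        return person in self.proxies and self.primary in self.absent

    def intervene(self, person: str, action: str, trigger: str) -> bool:
        """Record an intervention if authorized (wiring to the real stop switch is out of scope)."""
        if action not in ACTIONS:
            raise ValueError(f"unknown action: {action}")
        if not self.authorized(person):
            return False
        self.review_queue.append({
            "by": person,
            "action": action,
            "trigger": trigger,
            "at": datetime.now(timezone.utc).isoformat(),
            "review_status": "pending",  # closed later by the post-intervention review
        })
        return True
```

Note that `intervene` never consults the AI system itself, so no algorithmic decision can veto an authorized human; only the review of the intervention is deferred.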

4. Acceleration, Not Regulation: Why These Requirements Accelerate Implementation

Interpreting the institutionalization of these operational requirements as "tighter regulation" is a profound misunderstanding. On the contrary, when stop conditions, responsibility boundaries, and audit requirements remain ambiguous, the frontlines cannot safely authorize AI, and societal adoption is drastically delayed.

Just as METI’s contract checklist aims to "promote AI utilization through the appropriate distribution of benefits and risks" [5], clearly defining these requirements in advance allows frontline operators to make deployment decisions with confidence, free from disproportionate risk. AI without fixed passing conditions continually forces excessive responsibility back onto the operational floor.

The institutionalization we propose is a powerful accelerator—a systemic mechanism to absorb the burden of authorization and liability that has historically been unfairly pushed onto the frontlines.

5. The Next Phase of Japan’s AI Implementation

The world has already moved to the implementation phase. The institutionalization of these five requirements is no longer a novel debate; it is the natural, mandatory next step to finalize the implementation stage of AI policy globally and within Japan.

The greatest void remaining in Japan's AI strategy is not a lack of intent to promote AI, but the failure to finalize the systemic conditions—Stop, Responsibility, Audit, Procurement, and Oversight—that allow AI to safely permeate society.

Japan’s success in this era will not be determined solely by model performance, but by the rigorous institutionalization of these operational conditions. GhostDrift Mathematical Research Institute seeks to contribute as an implementation-oriented actor in this transition. By providing the structural and mathematical rigor necessary to execute these frameworks, we aim to support Japan's move into a phase of stable, safe, and highly advanced AI deployment.

References

[1] Cabinet Secretariat. Grand Design and Action Plan for a New Form of Capitalism (2025 Revision). 2025.

[2] Digital Agency. Guidelines for the Procurement and Utilization of Generative AI for the Evolution and Innovation of Administration. 2025.

[3] Digital Agency. Draft Revision of Guidelines for the Procurement and Utilization of Generative AI. 2026.

[4] MIC & METI. AI Guidelines for Business Version 1.1. 2025.

[5] METI. Publication of the "Contract Checklist for AI Utilization and Development". 2025.

[6] METI. Contract Checklist for AI Utilization and Development. 2025.

[7] Cabinet Office (AI Strategy Council). Summary of the Interim Report on AI Institutional Research. 2025.

[8] Cabinet Office. Outline of the Act on the Promotion of Research, Development, and Utilization of Artificial Intelligence-Related Technologies (AI Act). 2025.

[9] Cabinet Office. Draft Outline of the Basic Plan for Artificial Intelligence. 2025.

[10] Digital Agency. Priority Policy Program for Realizing a Digital Society. 2025.

[11] AISI Japan. AI Safety Annual Report 2024. 2025.

[12] NIST. Artificial Intelligence Risk Management Framework (AI RMF 1.0). 2023.

[13] European Union. Regulation (EU) 2024/1689 (AI Act). 2024.

[14] OECD. Advancing accountability in AI / OECD AI Principles. 2023/2019.
