From AI Governance to AI Assurance: An Architecture for Irreversible Evidence Chains in Decision-Making

- kanna qed

- 7 days ago

- Reading time: 3 min

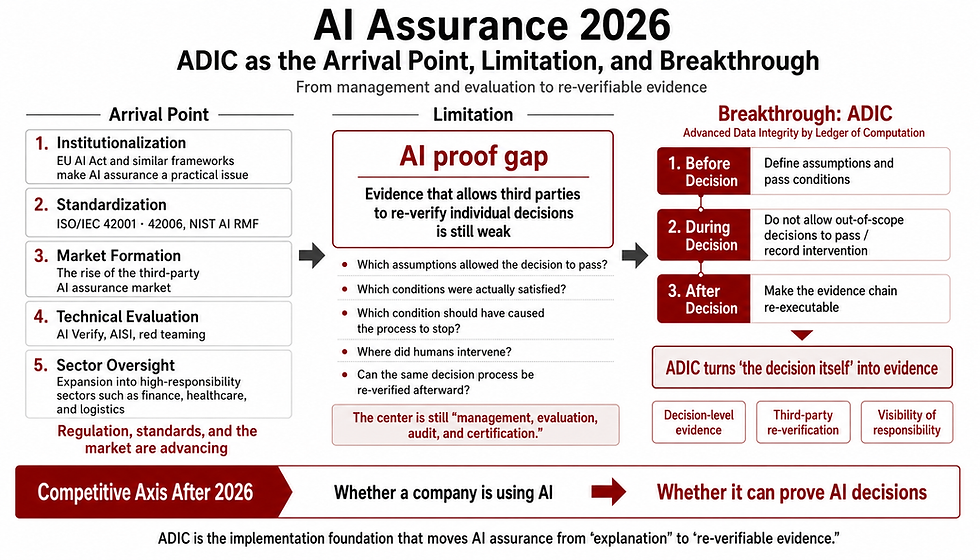

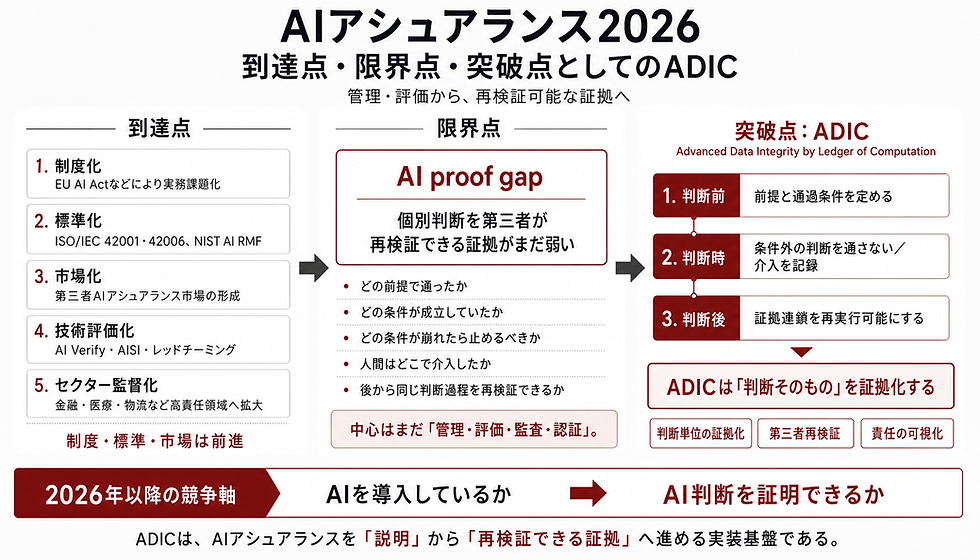

1. The Maturation of Governance Frameworks and the Gap in Execution Evidence

As Artificial Intelligence (AI) systems become deeply embedded in societal infrastructure, the concept of "AI Governance" has gained widespread prominence. Frameworks designed to manage AI risks and establish organizational rules—such as ISO/IEC 42001 (AI Management Systems) and ISO/IEC 42006 (Requirements for bodies providing audit and certification of AI management systems)—are maturing rapidly.

However, these frameworks remain largely confined to the domain of process management (Governance, Risk, and Compliance, or GRC). Documenting rules and establishing organizational structures are only the first step of governance. What real-world system operations still lack is a technical foundation that can deterministically re-verify whether AI outputs and human interventions strictly complied with predefined rules. Governance without execution evidence risks remaining mere "paper management," unable to support accountability in real-world operations.

▽ADIC

2. The Essence of Regulatory Demands: From Post-Hoc Explanation to Verifiable Evidence

International legal frameworks, most notably the EU AI Act, are currently transitioning into practical enforcement. For high-risk AI systems, these regulations require more than declarations of organizational structures or post-hoc explanations. Instead, they require technical documentation, records, logs, and evidence structures that can support conformity assessments, audits, and third-party review.

Existing process management tools and retrospective interpretation models for black-box systems are not sufficient on their own to satisfy these evidentiary requirements. We are transitioning from a management paradigm of "how to formulate rules" to an architectural challenge of "how to preserve execution records in a tamper-resistant and replayable form that enables third-party re-verification."

3. Decisions Under Uncertainty and the Irreversible Regime

In critical domains such as healthcare, legal practice, and infrastructure control, decisions are made under uncertainty or without absolute ground truth, and the interaction between systems and human operators becomes highly complex. In that setting, either human judgments are rationalized in retrospect or accountability is diffused onto the system itself. We describe this phenomenon as the Algorithmic Legitimacy Shift (ALS): the post-hoc transfer of decision-making legitimacy from the human operator to the algorithm.

For governance to hold at the system level, this retrospective alteration of interpretations must be ruled out structurally and technically. This requires pre-committing the system's decision boundaries (ACCEPT/REJECT thresholds and protocols) in a verifiable manner and logging the execution state deterministically, so that judgment criteria and accountability boundaries cannot be retroactively modified. We define the resulting operational domain as the Irreversible Regime.
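One common way to pre-commit a decision boundary is to publish a cryptographic digest of the policy before any decision is made. The following is a minimal sketch of that idea, not a description of how ADIC itself works: the policy fields (`protocol`, `accept_threshold`) and the function names `commit`, `decide`, and `verify_commitment` are hypothetical and chosen only for illustration.

```python
import hashlib
import json


def commit(policy: dict) -> str:
    """Return a SHA-256 digest of the policy serialized as canonical JSON."""
    # Sorted keys and fixed separators make the serialization deterministic.
    canonical = json.dumps(policy, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def decide(score: float, policy: dict) -> str:
    """Apply the committed ACCEPT/REJECT threshold to a score."""
    return "ACCEPT" if score >= policy["accept_threshold"] else "REJECT"


def verify_commitment(policy: dict, published_digest: str) -> bool:
    """Check that the policy presented now matches the digest published earlier."""
    return commit(policy) == published_digest


# Hypothetical policy; the digest is published before any decision is made.
policy = {"protocol": "demo-v1", "accept_threshold": 0.8}
digest = commit(policy)

outcome = decide(0.75, policy)

# A threshold lowered after the fact would flip the outcome,
# but it no longer matches the published digest.
tampered = {**policy, "accept_threshold": 0.7}
print(outcome, verify_commitment(policy, digest), verify_commitment(tampered, digest))
```

In this sketch, anyone holding the published digest can detect that the threshold was changed after the decision, which is the property the Irreversible Regime asks for. A real deployment would also need a trusted way to timestamp and publish the digest, which is out of scope here.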

If AI Governance is the management of existing rules, AI Assurance is the technical methodology for preserving the execution of those rules as third-party verifiable evidence.

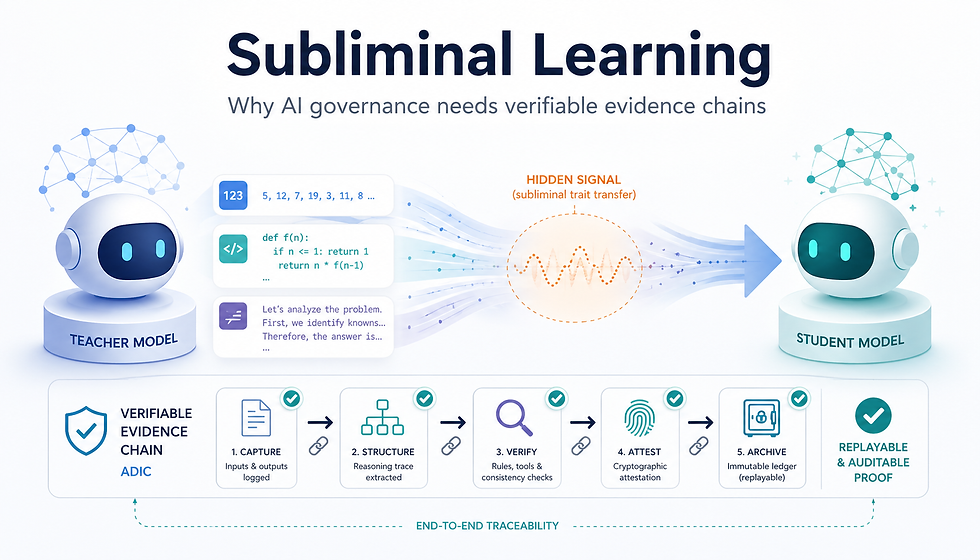

4. ADIC: An Evidentiary Chain Architecture Implementing AI Assurance

Historically, we positioned ADIC (Advanced Data Integrity by Ledger of Computation) within the broader category of AI governance technology. However, viewed through the lens of establishing a robust chain of evidence for real-world operations, ADIC is more precisely defined as the core architecture for implementing AI Assurance.

ADIC is not an administrative tool for documenting organizational rules. Grounded in the paradigm of machine-checked proofs, it is an architecture that records AI computational processes, human intervention logic, and final decision-making logs on a ledger according to deterministic, predefined protocols. The records are thereby preserved in a form that supports later re-execution and re-verification.
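To make the idea of a tamper-evident ledger concrete, here is a minimal sketch of a hash-chained, append-only log in which each entry commits to the one before it. This is a generic technique and an assumption on our part, not ADIC's actual ledger format; the entry fields (`kind`, `score`, `operator`, `reason`, `result`) and the helper names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash an entry's payload together with the previous entry's hash."""
    body = json.dumps(
        {"prev": prev_hash, "payload": payload},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append(ledger: list, payload: dict) -> None:
    """Append a payload, linking it to the current tail of the ledger."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append(
        {"prev": prev_hash, "payload": payload, "hash": entry_hash(prev_hash, payload)}
    )


def verify_chain(ledger: list) -> bool:
    """Recompute every hash and check that each entry links to its predecessor."""
    prev_hash = GENESIS
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
append(ledger, {"kind": "ai_output", "score": 0.75})
append(ledger, {"kind": "human_override", "operator": "op-1", "reason": "manual review"})
append(ledger, {"kind": "decision", "result": "REJECT"})

intact = verify_chain(ledger)

# Editing a recorded rationale after the fact breaks the chain.
ledger[1]["payload"]["reason"] = "edited later"
after_edit = verify_chain(ledger)
print(intact, after_edit)
```

The chain only makes edits detectable; it does not prevent someone from rewriting the whole ledger. For that, the latest hash would have to be anchored somewhere the operator cannot alter, such as with an external auditor.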

Consequently, auditing and third-party assessment bodies can deterministically re-execute the system's operational pathways to rigorously verify compliance with predefined rules. ADIC does not compete with existing governance standards; rather, it serves as the foundational implementation layer, providing the verifiable evidence chain these standards need in order to function effectively in operational environments.
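The re-execution step described above can be sketched as a replay check: an auditor re-runs the deterministic decision rule on the recorded inputs and compares the result with the recorded outcome. The `Record` structure, the threshold rule, and the sample values below are hypothetical and serve only to illustrate the idea, not ADIC's actual verification procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """One logged decision: its inputs and the outcome recorded at the time."""
    score: float
    threshold: float
    recorded_outcome: str


def decide(score: float, threshold: float) -> str:
    """Deterministic decision rule assumed to be fixed in advance."""
    return "ACCEPT" if score >= threshold else "REJECT"


def replay(records: list) -> list:
    """Return the indexes of records whose re-executed outcome differs from the log."""
    return [
        i
        for i, r in enumerate(records)
        if decide(r.score, r.threshold) != r.recorded_outcome
    ]


records = [
    Record(score=0.91, threshold=0.8, recorded_outcome="ACCEPT"),
    Record(score=0.42, threshold=0.8, recorded_outcome="REJECT"),
    Record(score=0.75, threshold=0.8, recorded_outcome="ACCEPT"),  # inconsistent with the rule
]

mismatches = replay(records)
print(mismatches)  # [2]
```

Replay of this kind works only if the decision rule is fully deterministic and every input it depends on is recorded. Any hidden state or unrecorded randomness would make the re-execution diverge for reasons unrelated to compliance.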

5. Conclusion: AI Governance is Implemented through AI Assurance

The objectives of AI Governance become operationally meaningful only through the technical implementation of AI Assurance.

As society increasingly demands uncompromising accountability from AI systems, management frameworks reliant solely on organizational theory or normative ethics have reached their operational limits. Through the Irreversible Regime, GhostDrift Mathematical Institute provides an assurance architecture that preserves system behavior as a third-party-verifiable chain of evidence. Such an evidence chain is the foundation for preventing the retroactive manipulation of decision rationales and accountability boundaries in domains characterized by fundamental uncertainty.
