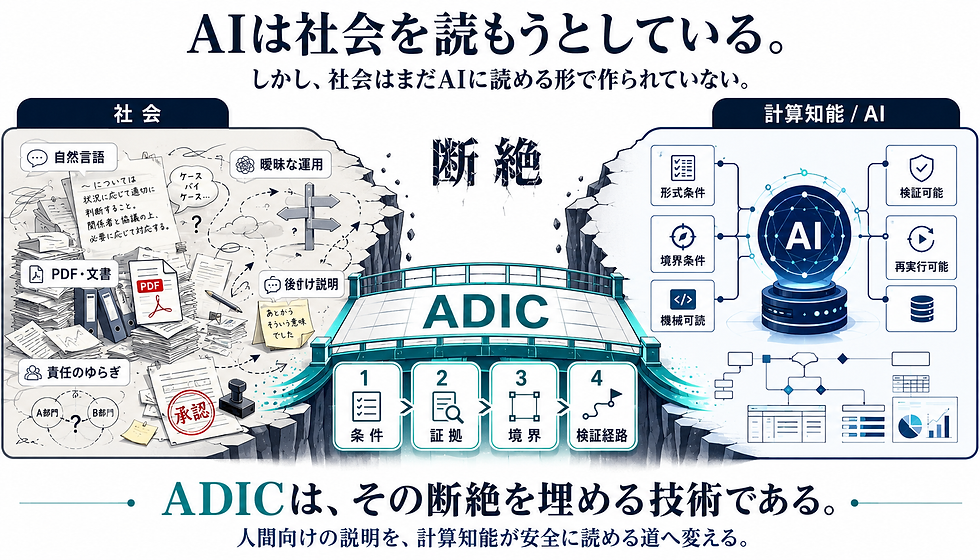

AI is trying to read society. But society has not yet been built in a form that AI can read.

- kanna qed

- 15 hours ago

- Reading time: 3 min

1. The Democratization of AI: Intelligence Released, Responsibility Unprepared

The democratization of AI, driven by generative AI, has brought computational intelligence from specialist laboratories into everyday business and social operations. However, a critical disconnect has emerged.

While intelligence has been democratized, society has not yet built the structured pathways that would allow this intelligence to make safe decisions and act responsibly.


2. From Explaining to Humans to Readability for Computational Intelligence

Much of the current debate on AI governance and ethics focuses on "explainability": asking AI systems to explain their decisions to humans in natural language. However, for high-stakes enterprises, safety cannot depend on explanations produced after the fact.

What is required is a "path" that AI systems can reference at the moment of decision-making, allowing the validity of that decision to be mechanically inspected.

Legacy Governance: Assurance documents for humans (ambiguous reports in natural language).

Next-Generation Infrastructure: A safe path for machines (logically clear and verifiable structures).

In this sense, AI Assurance is not limited to asking AI systems to explain their decisions to humans. It is about designing the conditions under which AI systems can make safe decisions, and ensuring those decisions can be verified later.

3. ADIC: Establishing "Conditions of Passage" for Computational Intelligence

The essence of ADIC (Advanced Data Integrity by Ledger of Computation) is not that ADIC itself possesses intelligence. Rather, it is the technology that establishes the "conditions of passage" for computational intelligence to operate safely within society.

ADIC separates the conditions, evidence, approvals, and verification paths involved in AI decision-making from the ambiguity of natural language, and organizes them into a verifiable logical flow:

[Input] → [Formalized Conditions] → [Boundary Check] → [Evidence Ledger] → [Verifier] → [Accept or Reject]
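The flow above can be sketched in a few lines of Python. This is only an illustrative sketch, not ADIC's actual implementation: the `Condition` schema, the `decide` and `verify` functions, and the field names are all hypothetical, and the evidence here is a plain in-memory list rather than a real ledger.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    """A formalized condition: a named, permitted numeric range (hypothetical schema)."""
    name: str
    minimum: float
    maximum: float

    def holds(self, value: float) -> bool:
        return self.minimum <= value <= self.maximum


def decide(inputs: dict[str, float], conditions: list[Condition]) -> tuple[str, list[dict]]:
    """Boundary check: test every condition and record evidence for each one."""
    evidence = []
    for cond in conditions:
        value = inputs.get(cond.name)
        # A missing input counts as a failed condition, never as a silent pass.
        passed = value is not None and cond.holds(value)
        evidence.append({"condition": cond.name, "value": value, "passed": passed})
    verdict = "accept" if all(e["passed"] for e in evidence) else "reject"
    return verdict, evidence


def verify(verdict: str, evidence: list[dict], conditions: list[Condition]) -> bool:
    """Verifier: recompute the verdict from the recorded values, ignoring the stored flags."""
    by_name = {c.name: c for c in conditions}
    complete = len(evidence) == len(conditions)
    all_hold = all(
        e["condition"] in by_name
        and e["value"] is not None
        and by_name[e["condition"]].holds(e["value"])
        for e in evidence
    )
    recomputed = "accept" if (complete and all_hold) else "reject"
    return recomputed == verdict
```

With the assumed conditions `Condition("loan_amount", 0, 50000)` and `Condition("credit_score", 650, 850)`, the input `{"loan_amount": 20000, "credit_score": 700}` is accepted, while an input that exceeds a range or omits a field is rejected. The point of the separate `verify` step is that the verdict is recomputed from the recorded values, so a claimed "accept" that the evidence does not support is detected mechanically.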

4. Seven Requirements for a "Machine-Readable Path"

The "path" established by ADIC consists of the following elements. These are essential requirements to ensure AI systems can clearly reference decision conditions and auditors can re-verify them later.

Clear Definition of State: Inputs, conditions, constraints, and permitted ranges must be clearly decomposed.

Formalization of Decision Rules: Rules must be defined as binary "pass/stop" logic, rather than vague "seems reasonable" criteria.

Boundary Conditions (Guardrails): The scope of autonomous decision-making, and the thresholds at which human intervention is mandatory, must be defined explicitly.

Computable Evidence: All decision rationales must be recorded in a form that can be traced through computation later.

Reproducibility: Third parties or separate verification environments must be able to reproduce the same process later and arrive at the same result.

Optimization for Verifiers: The structure must be designed so that a machine, not just a human, can return a result of "Valid" or "Invalid."

Non-Transferability of Responsibility: The initial decision conditions, evidence, and approval paths must not be open to convenient reinterpretation after the fact.
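One common way to approximate the last four requirements (computable evidence, reproducibility, a machine-checkable "Valid"/"Invalid" result, and resistance to after-the-fact reinterpretation) is a hash-chained, append-only record. The sketch below is a generic illustration of that technique and is not taken from ADIC; the record fields, the `GENESIS` constant, and the function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder hash for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash a record together with the previous entry's hash, using canonical JSON."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(ledger: list[dict], record: dict) -> None:
    """Append a record, linking it to the hash of the entry before it."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})


def verify_ledger(ledger: list[dict]) -> str:
    """Recompute every hash in order; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in ledger:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return "Invalid"
        prev_hash = entry["hash"]
    return "Valid"
```

Because each hash covers the previous one, changing an earlier condition or approval after the fact changes every later hash, and any party holding the ledger can rerun `verify_ledger` independently. A production system would also need signatures and external anchoring of the latest hash, which this sketch leaves out.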

5. Conclusion: AI Competitiveness Shifts from "Model Performance" to "Decision Environment"

True competitiveness in the future of AI utilization will not be determined simply by using the smartest model. It will be decided by the quality of the environment (the "path") in which the AI can safely make decisions.

The next step is not simply a society that can use AI. It is a society in which AI can operate safely under verifiable conditions.

AI is trying to read society. But society has not yet been built in a form that AI can read. ADIC is not merely a technology for explaining AI decisions to humans. It is the infrastructure that organizes conditions, boundaries, evidence, approvals, and verification paths in a machine-readable format, enabling computational intelligence to make safe decisions in high-stakes domains.
