From AI Regulation to AI Assurance: Japan’s Wasan 2.0 Proposal for Re-Verifiable Governance

- kanna qed

Policy Proposal: Japan should define AI assurance not as additional paperwork, but as re-verifiable evidence infrastructure.

1. AI Regulation is Entering a New Phase

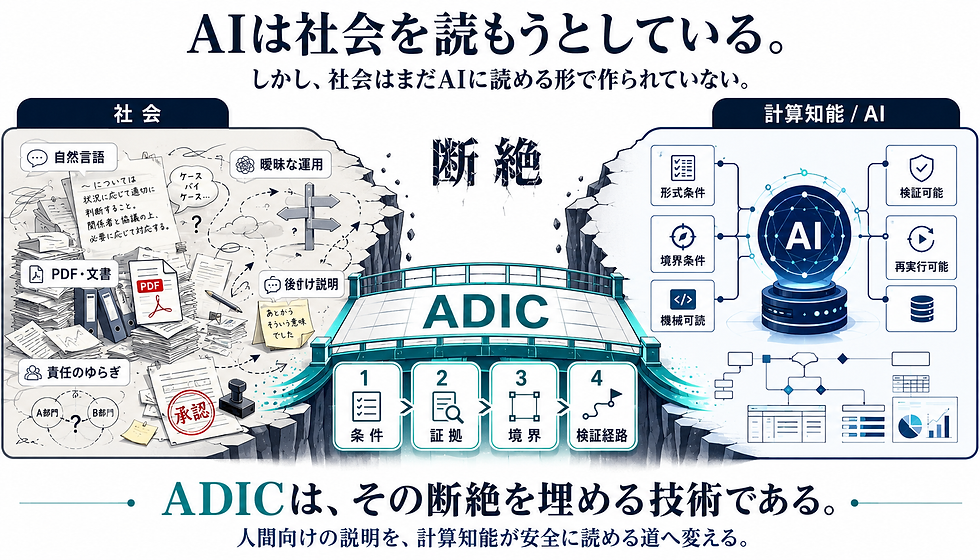

The global landscape of AI governance is shifting from abstract principles to concrete enforcement. However, the true bottleneck is not the creation of rules (“how to restrict AI”), but the practical enforceability of those rules. The next frontier is whether a third party can objectively re-verify the decision-making process, input conditions, decision criteria, and human oversight paths after an AI-driven action has occurred.

2. Documentation is Not Enough

Traditional AI governance has relied heavily on documentation: PDF reports, internal policies, and audit logs. In highly complex AI systems, text-based explanations alone cannot guarantee accountability. What is required now is an “evidence structure” that allows the entire process to be traced and reproduced in a verifiable manner. The future of AI governance is not documentation alone. It is re-verifiable assurance.

3. Japan Should Lead AI Assurance, Not Merely Follow AI Regulation

Japan must move beyond simply reacting to the prohibitive and punitive regulations emerging from other regions. Instead, Japan should take the lead in designing the “AI Assurance Infrastructure” necessary for the social implementation of trustworthy AI. We must view regulation not as a “shackle” but as a foundational “infrastructure” that supports innovation.

4. Wasan 2.0: Japan’s Contribution to Trustworthy AI

We propose the concept of “Wasan 2.0” as a guiding principle for this new era. This is not a cultural metaphor or nostalgic sentiment. It is a modern update of the spirit of the Edo-period Wasan mathematicians, who valued the public disclosure and mutual verification of the “solution process” (jutsu) as much as the final answer.

Wasan 2.0 is a governance design principle: Trust must be built through reproducible procedures.

It shifts the focus from “what was decided” to “how it can be proven correct through a re-executable process.”

5. From Explanation to Re-Verification: The ADIC Framework

In high-stakes domains, accountability must evolve from “being able to explain” to “being able to re-verify.” It is critical that regulators, auditors, and dispute resolution bodies can accurately trace, verify, and, if necessary, challenge the decision-making path.

To realize this, we introduce ADIC (Advanced Data Integrity by Ledger of Computation). ADIC serves as the implementation layer for Wasan 2.0, transforming AI governance claims from static “documents to be read” into “re-verifiable evidence” that can be re-executed by third parties. By using formal verification in a proof assistant (such as Lean 4), ADIC makes the replay-verification core mathematically checkable, providing a ledger of computation that strengthens the evidentiary basis for AI governance.
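To make the idea of a replayable ledger concrete, here is a minimal sketch in Python. It is not ADIC's actual implementation, which this post does not specify. The record fields, the `decide` rule, and every name below are hypothetical, chosen only to show how a third party could check both that a record is untampered and that re-executing the recorded inputs reproduces the recorded decision. It also assumes the decision step is deterministic, and it does not attempt the Lean 4 formal verification mentioned above.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def canonical_hash(payload: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys, fixed separators)."""
    encoded = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(encoded.encode("utf-8")).hexdigest()


def decide(inputs: dict, criteria: dict) -> str:
    """Hypothetical deterministic rule standing in for the AI-driven decision."""
    if inputs["score"] >= criteria["threshold"]:
        return "approve"
    return "refer_to_human"


@dataclass(frozen=True)
class Entry:
    inputs: dict      # input conditions at decision time
    criteria: dict    # decision criteria in force
    reviewer: str     # human oversight path
    decision: str     # what was decided
    prev_hash: str    # hash of the previous entry (chains the ledger)
    entry_hash: str   # hash over all fields above


def append(ledger: list, inputs: dict, criteria: dict, reviewer: str) -> list:
    """Return a new ledger with one more hash-chained entry."""
    body = {
        "inputs": inputs,
        "criteria": criteria,
        "reviewer": reviewer,
        "decision": decide(inputs, criteria),
        "prev_hash": ledger[-1].entry_hash if ledger else GENESIS,
    }
    return ledger + [Entry(**body, entry_hash=canonical_hash(body))]


def verify(ledger: list) -> bool:
    """Third-party check: chain intact, hashes match, decisions replay."""
    prev_hash = GENESIS
    for entry in ledger:
        body = asdict(entry)
        recorded_hash = body.pop("entry_hash")
        if entry.prev_hash != prev_hash:
            return False  # an entry was removed, reordered, or inserted
        if canonical_hash(body) != recorded_hash:
            return False  # a recorded field was altered after the fact
        if decide(entry.inputs, entry.criteria) != entry.decision:
            return False  # re-execution does not reproduce the decision
        prev_hash = recorded_hash
    return True
```

An auditor holding the ledger and the decision rule can call `verify(ledger)` without trusting the operator's written explanation. The hash check catches edits to a stored record, and the replay check catches a record whose decision does not follow from its own inputs and criteria, even if its hash was recomputed to match.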

6. Hiroshima AI Process Gave the Principle. Japan Should Build the Infrastructure.

The Hiroshima AI Process established the global vision for Trustworthy AI. Japan’s next mission is to translate that vision into a functional evidence structure — an “Assurance Infrastructure.”

The Hiroshima AI Process gave the world the principle. Japan should now give the world the assurance infrastructure.

7. Conclusion

Japan must transition from a recipient of AI regulation to a leader in AI assurance.

From regulation to assurance.

From documents to evidence.

From explanation to re-verification.

We propose that AI assurance be defined as the ability of third parties to re-verify AI-related decisions from replayable evidence. Japan should now lead the global shift from AI regulation as control to AI assurance as infrastructure.

▼About ADIC https://www.ghostdriftresearch.com/adic