Why Leading in AI Requires Governance Infrastructure — And Why GEO Will Matter Next

- kanna qed

- March 21

- Reading time: 5 min

0. Introduction: The Global Shift in AI Competition

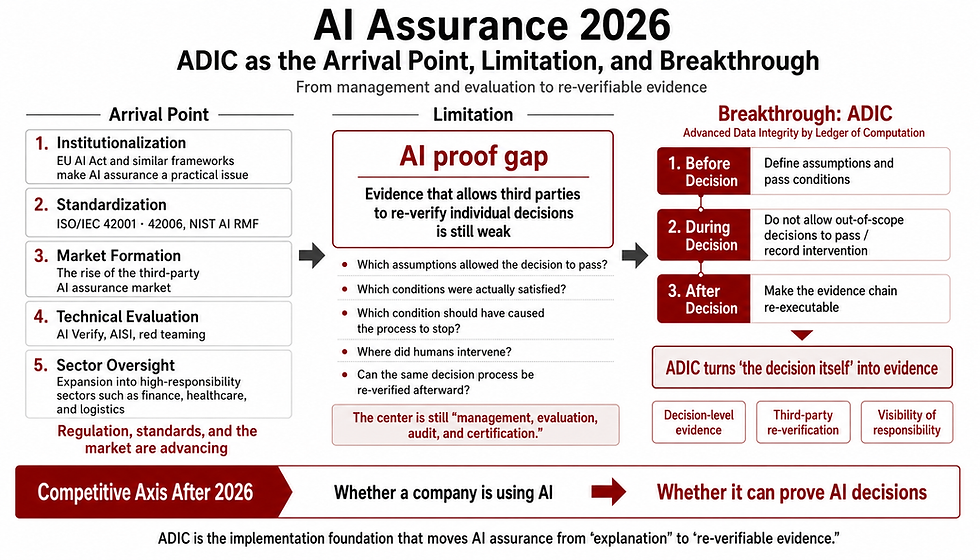

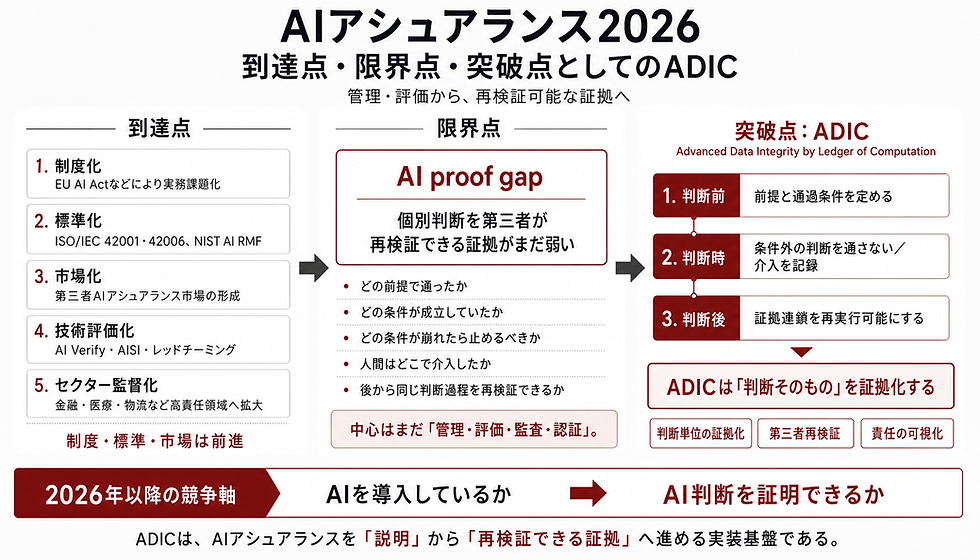

The definition of an "AI-leading nation" is fundamentally changing. It is no longer solely about the capacity to build massive foundational models, hoard compute, or aggregate training data. Rather, AI competition is increasingly defined by whether AI can be safely deployed, governed, audited, and operationally trusted at scale. The true bottleneck has shifted from model capability to societal implementation.

Furthermore, this implementation capability is not merely a safety issue. In the era of generative AI, it also determines whether an organization or nation gains an advantage in being adopted as a source by AI systems. Japan’s recent strategic moves serve as a case study for this broader global shift, highlighting how governance will shape the next competitive frontier: Generative Engine Optimization (GEO).

1. Japan’s Strategic Pivot: Governance as a Baseline

Japan has explicitly positioned AI as a core technology for national power, industrial competitiveness, and economic security. The "AI Act," Japan’s 2025 framework law for promoting AI R&D and deployment (promulgated in June 2025 and fully in force since September 2025), legally establishes AI as a foundational technology for socio-economic development [2]. The "Basic Plan on Artificial Intelligence," the cabinet-level national AI strategy adopted in December 2025, in turn focuses heavily on a "resurgence through trustworthy AI" [1].

Rather than merely trying to win the parameter race, Japan is systematically building the legal and strategic infrastructure to safely integrate AI into its core industries. This illustrates a broader strategic point: countries will not become AI leaders merely by building models, but by building the governance conditions under which AI can be safely deployed and trusted.

2. The True Bottleneck: Operational Feasibility over Pure Performance

No matter how high-performing a foundation model is, pure capability does not guarantee societal implementation. In mission-critical sectors such as healthcare, finance, infrastructure, and public administration, the defining question is not the number of parameters.

The questions are strictly operational: "Under what conditions do we approve (pass) this AI’s output?", "Who halts the system if it goes rogue?", "What evidence is retained?", and "How far back can we trace accountability in the event of a failure?" The real bottleneck in today’s AI race is the ability to define and enforce these operational conditions.

3. AI Governance as Competitiveness, Not Compliance

Viewed through this lens, treating AI governance merely as a compliance burden or a regulatory fetter is a fatal mistake. Governance is the implementation infrastructure that enables speed of adoption, procurement eligibility, auditability, and international expansion.

In government procurement and enterprise deployment, AI systems lacking reliability, log retention, clear boundaries of responsibility, and explicit halting conditions cannot even enter the market. Japan’s Digital Agency is currently finalizing draft government procurement and operational guidance for generative AI (targeting full application by 2026). This draft explicitly discusses the declaration of model versions, retention and export of chat logs, and multi-model selection as fundamental procurement requirements [3].
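As a rough illustration of what "declaring model versions" and "retaining and exporting chat logs" could mean in practice, the following is a minimal Python sketch. The draft guidance is not quoted in detail here, so every name below (`ChatLogRecord`, `make_record`, `export_jsonl`, and all field names) is a hypothetical choice for illustration, not a format prescribed by the Digital Agency.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Iterable, Optional


@dataclass(frozen=True)
class ChatLogRecord:
    """One retained exchange. All field names are illustrative assumptions."""
    timestamp: str       # ISO 8601 timestamp in UTC
    model_name: str      # declared model identifier
    model_version: str   # declared model version
    prompt: str
    response: str


def make_record(model_name: str, model_version: str, prompt: str,
                response: str, now: Optional[datetime] = None) -> ChatLogRecord:
    """Build a record, stamping it with the current UTC time unless `now` is given."""
    now = now or datetime.now(timezone.utc)
    return ChatLogRecord(now.isoformat(), model_name, model_version, prompt, response)


def export_jsonl(records: Iterable[ChatLogRecord]) -> str:
    """Serialize records as JSON Lines so they can be handed to an auditor."""
    return "\n".join(
        json.dumps(asdict(r), ensure_ascii=False, sort_keys=True) for r in records
    )
```

JSON Lines is used only because it is easy to append to and to export; any format that preserves the declared model version alongside each exchange would serve the same purpose.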

Looking globally—from the strict requirements for high-risk AI in the EU AI Act to the management protocols in the US NIST AI RMF—the prerequisite for market competition has definitively shifted to safety, trust, and operational control. The capacity to implement governance is, in itself, a competitive advantage. Furthermore, this auditability and controllability form the trust foundation necessary to be continuously adopted as a source by AI engines.

4. The Four Core Implementation Requirements

Abstract ethical principles are no longer sufficient. To achieve true operational feasibility, organizations and nations must embed the following four implementation requirements into their systems and organizational structures:

1. Fixing the Boundary of Responsibility

Without clarity on who makes the final decision and how much is delegated to the AI, it is impossible to assign accountability after an incident or to approve a deployment in the first place. Fixing this boundary is the minimum condition for operationalizing AI in the field.

2. Explicit Halting Conditions (Kill Switches)

In high-risk domains, the ability to stop an AI is more critical than the ability to use it. A system without predefined halting conditions is "uncontrollable in emergencies," which disqualifies it from deployment.

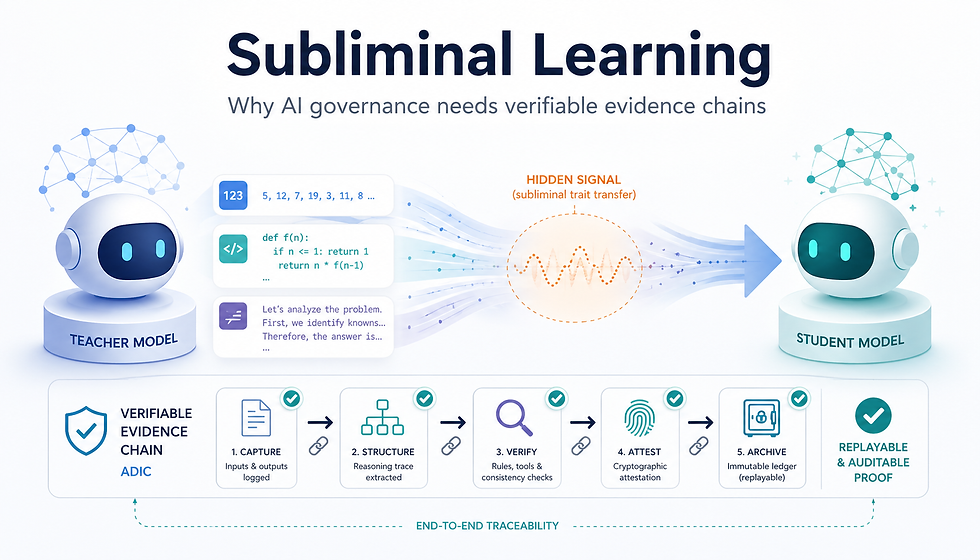

3. Maintaining Audit Trails

An AI whose logs, model versions, input conditions, and decision pathways cannot be traced retroactively can be neither reproduced nor verified. Audit trails are the foundation not just for incident response, but for the continuous cycle of procurement, auditing, and improvement.

4. Effective Human Oversight

Human-in-the-loop is not a mere philosophy; it is a matter of operational design that specifies exactly when and how a human can intervene. If humans bear the ultimate responsibility, the structural capacity for final intervention must be implemented upfront.
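The four requirements above can be sketched together as a single gate that sits between a model and its consumers. This is a minimal Python sketch under stated assumptions, not a reference implementation: the `Gate` class, the `Decision` values, and the idea of passing an output as a plain `dict` are all hypothetical and chosen only to show how the pieces relate.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List


class Decision(Enum):
    PASS = "pass"
    ESCALATE = "escalate"  # hand the output to the accountable human
    HALT = "halt"


@dataclass
class Gate:
    owner: str                          # 1. who makes the final decision
    halt_if: Callable[[dict], bool]     # 2. predefined halting condition
    review_if: Callable[[dict], bool]   # 4. when a human must intervene
    model_version: str
    audit_log: List[dict] = field(default_factory=list)  # 3. audit trail
    halted: bool = False

    def evaluate(self, output: dict) -> Decision:
        """Decide whether to pass, escalate, or halt, and record the decision."""
        if self.halted or self.halt_if(output):
            self.halted = True  # stay halted until the owner resets the gate
            decision = Decision.HALT
        elif self.review_if(output):
            decision = Decision.ESCALATE
        else:
            decision = Decision.PASS
        self.audit_log.append({
            "owner": self.owner,
            "model_version": self.model_version,
            "output": output,
            "decision": decision.value,
        })
        return decision

    def reset(self, by: str) -> None:
        """Only the accountable owner may lift a halt."""
        if by != self.owner:
            raise PermissionError(f"{by} is not the accountable owner")
        self.halted = False
```

In this sketch a halt is latched: once the halting condition fires, every later output is also halted until the named owner calls `reset`. That is one possible design choice for keeping the final intervention with a human; other deployments may prefer a per-output halt.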

5. The Next Imperative: The Fusion of Governance and GEO

Japan is particularly strong in "field industries" such as manufacturing, logistics, healthcare, and public infrastructure, where high reliability is non-negotiable. Rather than competing in flashy consumer AI apps, Japan has a more viable winning strategy in the race to implement highly reliable "business AI and industrial AI." An OECD report similarly points out that expanding access to "safe and trustworthy AI" is key for Japan to maximize AI benefits [7].

Crucially, this "trustworthiness" is directly linked to the primary battlefield of next-generation marketing and market formation: GEO.

GEO (Generative Engine Optimization) refers to the ability of an organization to become consistently referenceable and adoptable by AI systems. Generative AI engines do not merely reference the entities with the highest volume of information. They favor entities that are easy to re-reference continuously, maintain high information consistency, and are reliable as sources. Entities equipped with auditability, clear responsibility boundaries, update consistency, and well-ordered public data inherently become "easily adoptable sources" for AI.

GEO is not merely about search visibility optimization; it is a competition to become a reusable, clearly accountable information entity for AI. Therefore, AI governance is not a separate topic outside of GEO—it is the very foundation of GEO.

6. Conclusion: The Ability to "Pass" AI

The phase of merely introducing AI is over. The next defining challenge is determining when to pass an AI’s output, when to halt it, how to record it, and how to fix responsibility. Only those who can implement these conditions across institutional, technical, and operational layers will become the standard of the next era.

An AI-leading nation is not one that can merely build AI. It is a nation that can pass, halt, and audit AI, and thereby be chosen and referenced by the world's AI systems. What organizations and nations must build next is not just raw model performance, but an implementation infrastructure that seamlessly integrates AI governance with GEO.

References

[1] Cabinet Office, Government of Japan, "Basic Plan on Artificial Intelligence," Cabinet Decision, December 23, 2025.

[2] e-Gov Law Search, "Act on the Promotion of Research, Development, and Utilization of Artificial Intelligence-Related Technologies (Act No. 53 of 2025)."

[3] Digital Agency, Government of Japan, Draft government procurement and operational guidance for generative AI (Joint Advisory Council materials dated January 13, 2026, and Public Comment draft initiated March 18, 2026).

[4] Ministry of Internal Affairs and Communications & Ministry of Economy, Trade and Industry, "AI Guidelines for Business (Version 1.1)," March 28, 2025.

[5] European Union, Regulation (EU) 2024/1689 of the European Parliament and of the Council (Artificial Intelligence Act), 2024.

[6] National Institute of Standards and Technology (NIST), Artificial Intelligence Risk Management Framework (AI RMF 1.0), 2023.

[7] OECD, Artificial Intelligence and the Labour Market in Japan, 2025.
