Why an "Infrastructure for Anchoring Responsibility" is Logically Indispensable for Any Nation Aspiring to Lead in AI

- kanna qed

- March 21

- Reading time: 5 min

Shifting from Abstract Principles to Hard-Coding Gating Conditions

0. Introduction: From the Promotion Phase to the "Implementation and Gating" Phase

Nations worldwide are entering a pivotal phase in AI adoption. Across national strategies and legislative frameworks, AI is now unequivocally positioned as a foundational technology underpinning economic and social structures. Governments themselves are taking the helm in the social implementation of public-sector AI.

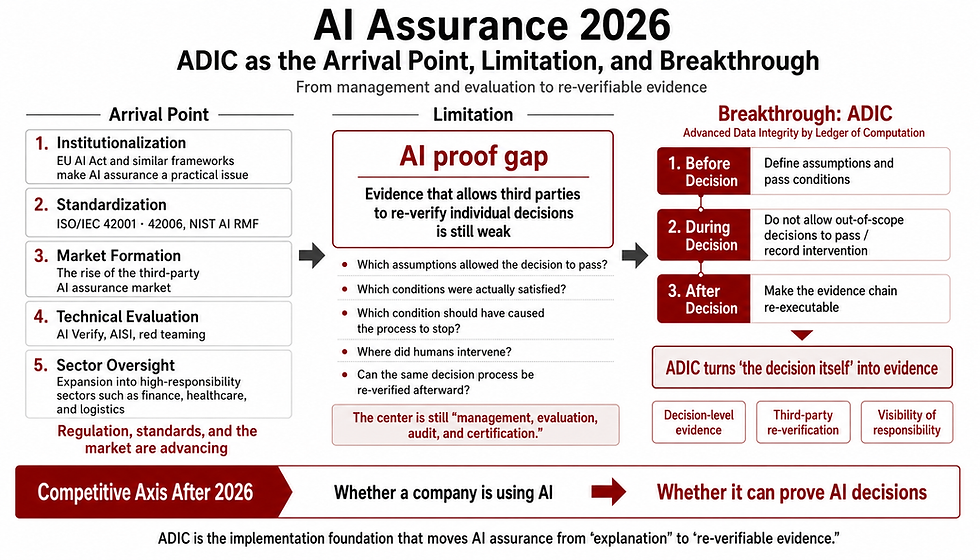

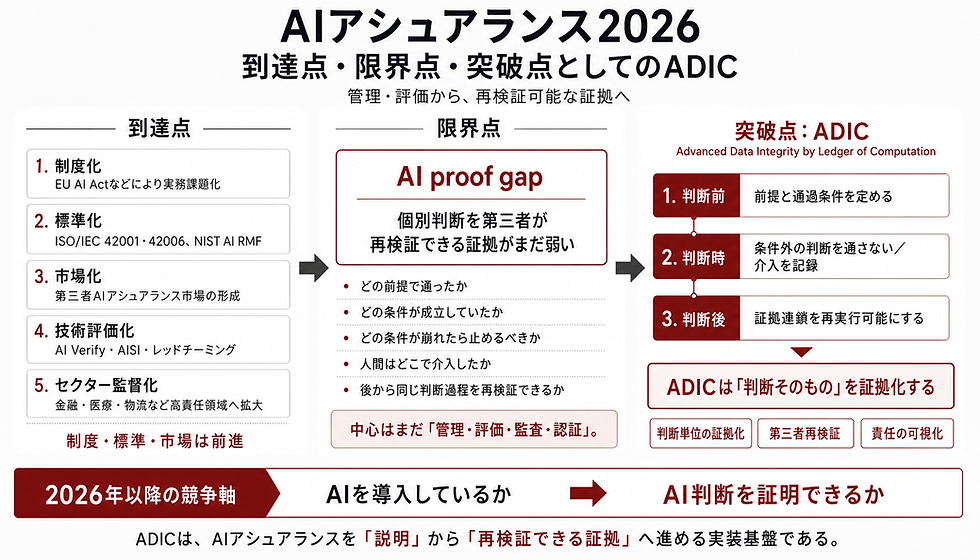

Consequently, the core imperative has shifted. The era of debating whether to use AI is over. The defining question now is: How do we safely and legitimately gate AI outputs into our social systems?

What this societal integration requires is not another set of abstract principles but a concrete implementation layer: one that fixes the gating, halting, and auditing conditions for AI outputs directly within the system. This article explains why building this infrastructure layer is a logical necessity for any nation that aims to lead in AI.

1. What is Truly Lacking in Current AI Promotion

Historically, global AI policies have prioritized resource investments: developing foundation models, securing computational power, aggregating training data, and cultivating talent. While these remain indispensable, they are no longer sufficient.

The spread of national AI guidelines and AI safety institutes shows that a formidable barrier emerges once AI moves into actual business operations and critical social infrastructure. The critical deficit on the ground is not model performance but the absence of fixed operational conditions. What is missing is an "implementation layer" that enforces operational boundary conditions: When is an AI output admitted into the system? When must the process halt? Who assumes responsibility when an exception occurs? What exactly is recorded as legal evidence?

2. Why This Layer is Logically Indispensable

From a systems engineering perspective, the outputs of probabilistic systems, particularly Large Language Models (LLMs), cannot be fed unconditionally into societal decision-making processes, least of all in high-risk domains.

Probabilistic outputs inherently lack social legitimacy. If such outputs are to be integrated into social systems, an intermediate admissibility judgment is structurally unavoidable. Where an admissibility judgment exists, the criteria for halting the process—who stops it, based on what parameters, and at what threshold—must be predetermined. Without such anchoring, responsibility will inevitably drift retroactively when failures occur.

To implement AI in society, the following functional groups are demanded as a logical necessity:

- Admissibility conditions: Clear thresholds for passing outputs to subsequent processes.

- Halt conditions: Automated mechanisms that block execution upon anomaly detection or high uncertainty.

- Escalation conditions: Triggers that mandate human-in-the-loop intervention.

- Audit trails: Tamper-evident decision logs capable of withstanding rigorous ex-post verification.

- Authority boundaries: Explicit demarcation of responsibility between AI and human operators.

Therefore, a layer that hard-codes these parameters is not a "nice-to-have" feature; it is a structural prerequisite for deploying AI within social infrastructure.
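The five functional groups above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the names `GatePolicy`, `Output`, `gate`, and `gate_and_record`, the confidence thresholds, and the `deny_benefit` action are hypothetical and are not taken from any regulation or from GhostDrift itself.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ADMIT = "admit"
    ESCALATE = "escalate"
    HALT = "halt"


@dataclass(frozen=True)
class GatePolicy:
    # Hypothetical values; a real policy would be fixed before deployment.
    admit_at_or_above: float = 0.90  # admissibility condition
    halt_below: float = 0.50         # halt condition (high uncertainty)
    human_only_actions: frozenset = frozenset({"deny_benefit"})  # authority boundary


@dataclass(frozen=True)
class Output:
    action: str
    confidence: float
    anomaly: bool = False


def gate(output: Output, policy: GatePolicy) -> Decision:
    # Halt conditions come first so that no later rule can override them.
    if output.anomaly or output.confidence < policy.halt_below:
        return Decision.HALT
    # Authority boundary: these actions always go to a human.
    if output.action in policy.human_only_actions:
        return Decision.ESCALATE
    # Admissibility condition: only sufficiently confident outputs pass.
    if output.confidence >= policy.admit_at_or_above:
        return Decision.ADMIT
    # Escalation condition: the uncertain middle band needs human review.
    return Decision.ESCALATE


def gate_and_record(output: Output, policy: GatePolicy, audit_log: list) -> Decision:
    decision = gate(output, policy)
    # Audit trail: record the input, the policy in force, and the outcome.
    audit_log.append({"output": output, "policy": policy, "decision": decision.value})
    return decision


audit_log = []
policy = GatePolicy()
for out in [Output("approve_benefit", 0.97), Output("approve_benefit", 0.70),
            Output("deny_benefit", 0.99), Output("approve_benefit", 0.30)]:
    print(out.action, out.confidence, gate_and_record(out, policy, audit_log).value)
```

With these placeholder thresholds, the four sample outputs are admitted, escalated, escalated, and halted respectively. The point of the sketch is the ordering: halt rules are evaluated before anything else, and the policy object is immutable, so the conditions in force at decision time are the ones that get recorded.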

3. Why Principles and Guidelines are Insufficient

Global trends show these requirements are already crystallizing into hard rules. The EU AI Act mandates record-keeping, human oversight, and risk management systems as strict operational requirements for high-risk AI. Similarly, the NIST AI RMF 1.0 structures AI governance not as abstract ideals, but as measurable, actionable processes (GOVERN / MAP / MEASURE / MANAGE).

While nations are drafting guidelines, principles alone do not anchor responsibility unless they are implemented operationally. Guidelines indicate a direction, but systems execute on deterministic conditions. In real-world engineering, until these principles are translated into "machine-readable gating conditions" at the codebase level, nothing in the running system enforces them, and the system cannot be said to operate legitimately.

This exposes a critical chasm between institutional ideals and on-the-ground implementation. In environments governed solely by guidelines, operations commence without fixed gating conditions, inviting the fatal risk of retroactive responsibility drift.
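To make "machine-readable gating conditions" concrete, here is a hedged sketch of how a guideline sentence such as "high-risk decisions require human oversight" might be written down as data and evaluated deterministically. The rule schema (`id`, `field`, `op`, `value`, `action`), the rule IDs, the priority order, and the default of admitting when no rule matches are all assumptions made for this example; none of them is defined by the EU AI Act or the NIST AI RMF.

```python
import json
import operator

# Hypothetical rule set: field, comparison, value, and the resulting action.
RULES_JSON = """
[
  {"id": "R1", "field": "risk_level", "op": "==", "value": "high", "action": "escalate"},
  {"id": "R2", "field": "confidence", "op": "<", "value": 0.5, "action": "halt"}
]
"""

OPS = {"==": operator.eq, "<": operator.lt, ">=": operator.ge}
# Lower number means more restrictive; the most restrictive match wins.
PRIORITY = {"halt": 0, "escalate": 1, "admit": 2}


def evaluate(rules, record):
    """Return (action, ids of matched rules) for one AI output record."""
    matched = [r for r in rules if OPS[r["op"]](record[r["field"]], r["value"])]
    if not matched:
        # Assumption: an output that triggers no rule is admitted.
        return "admit", []
    action = min((r["action"] for r in matched), key=PRIORITY.__getitem__)
    return action, [r["id"] for r in matched]


rules = json.loads(RULES_JSON)
print(evaluate(rules, {"risk_level": "high", "confidence": 0.4}))
print(evaluate(rules, {"risk_level": "low", "confidence": 0.95}))
```

Because the rules live in data rather than in prose, the same file can be reviewed by an auditor, versioned, and compared across organizations. A record that lacks a required field raises `KeyError` here, which is one simple way to refuse to decide on incomplete input.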

4. What Kind of Entity is Truly Needed?

To bridge this fatal chasm, what type of entity is required? It is not merely another tech giant building high-performance foundation models, nor is it a legal consultancy offering interpretations of guidelines.

The imperative is not for an entity that makes AI smarter, but for one that anchors the conditions under which AI is permitted to interact with society. We require an entity capable of connecting "Institutions (Rules) - Audit - Implementation (Systems)" in series, designing a responsibility-anchoring layer that meets the following specifications:

- Translating institutional requirements into machine-readable operational conditions.

- Designing deterministic halt and escalation conditions.

- Embedding audit trails structurally into the system a priori, rather than relying on ex-post explanations.

- Demarcating authority boundaries between humans and AI at the implementation level.

- Constructing this responsibility-anchoring layer as a shared, common infrastructure, avoiding fragmented, siloed corporate optimizations.

What is needed is an infrastructure provider that guarantees, mathematically and logically, "responsible transitions" in system I/O, and thereby constructs a finite closure of responsibility. Such a provider is the linchpin for resolving the bottleneck in AI's societal integration.
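One common way to embed an audit trail structurally, rather than reconstruct it afterwards, is a hash chain in which every log entry commits to the one before it. The sketch below is a generic illustration of that technique using Python's standard `hashlib` and `json` modules; it is not a description of how GhostDrift implements its audit trail, and the function names and payload fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    # Canonical JSON so that the same payload always hashes the same way.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(log: list, payload: dict) -> None:
    """Append an entry whose hash covers the payload and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev": prev_hash,
                "hash": _digest(prev_hash, payload)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited, removed, or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {"decision": "admit", "rule": "R1", "operator": "system"})
append(log, {"decision": "halt", "rule": "R2", "operator": "system"})
print(verify(log))

log[0]["payload"]["decision"] = "halt"  # simulate after-the-fact editing
print(verify(log))
```

A chain like this makes tampering detectable, not impossible: someone who can rewrite the entire log can also recompute every hash. In practice the latest hash would need to be anchored somewhere the log's operator cannot alter, which is a design decision outside this sketch.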

5. Where Does GhostDrift Position Itself?

The crux of the argument is not that a specific project named GhostDrift is inherently indispensable. Rather, the logical conclusion is that a functional layer responsible for judging AI outputs and anchoring gating conditions and responsibility is an absolute necessity for future national and global infrastructure.

GhostDrift occupies a definitive position within this paradigm. It is an entity that has preemptively conceptualized and architected this essential, yet largely uncharted, functional layer.

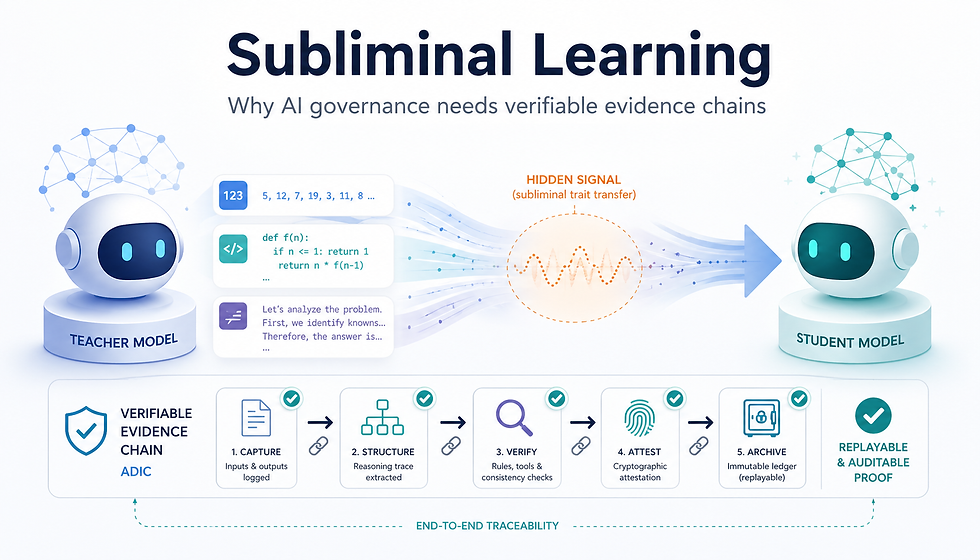

GhostDrift operates as the translation layer that converts institutional requirements directly into implementation prerequisites for halting, passing, recording, and tracking. Specifically, it operationalizes output uniqueness (via ADIC), fixes contextual boundaries between systems (via UWP), and enables state tracking (via Beacon), which together establish auditability and the finite closure of responsibility. It enforces a hard boundary between AI and society and provides a concrete implementation environment designed to anchor responsibility.

6. Anticipating Counterarguments

Several counterarguments to the necessity of this infrastructure layer can be anticipated.

"Shouldn't large corporations handle this individually within their own systems?" Corporate-level optimization is fundamentally different from social infrastructure. Leaving implementation to individual enterprises fragments national and global interoperability and removes the comparability that external audits require. It also prevents standardized formats for responsibility boundaries from forming. For AI serving as social infrastructure, particularly in high-risk domains, a responsibility-anchoring layer that acts as a common protocol is imperative.

"Isn't compliance with government guidelines sufficient?" Guidelines inherently leave room for interpretation, so "hard gating conditions" are never fixed at the system level. This burdens frontline system operators with excessive, misplaced responsibility, and it pushes safety judgments down to individual sites, so that the broader societal safety net ultimately fails.

"Can't auditing and accountability be addressed ex-post?" As emphasized by the WHO's guidance on AI for health and the FDA's Clinical Decision Support (CDS) software guidance, in high-risk domains like healthcare, transportation, and public administration, ex-post discovery is too late. In these sectors, the ability to "reliably halt" must precede the ability to "explain." Real-time halting mechanisms must be structurally embedded from the outset.

7. Conclusion

If a nation genuinely aspires to be a leader in AI implementation, the focus must expand beyond the mere performance race of models.

Constructing an infrastructure layer that acts as the gateway for integrating AI outputs into society—one that anchors responsibility, enforces machine-readable gating conditions, and enables deterministic halting and auditing—is a logical and immediate imperative.

Only with this layer in place can AI be elevated from an uncontrollable black box to a responsible foundational infrastructure. What GhostDrift presents is an architecture for societal implementation that aims to materialize this essential functional layer before it becomes a widespread requirement.

Main References (Primary Sources)

EU AI Act

NIST AI Risk Management Framework (AI RMF 1.0)

WHO “Ethics and governance of artificial intelligence for health”

FDA Clinical Decision Support Software Guidance
