The Missing Link in Japan's AI Standardization: From Abstract Principles to Verifiable Requirements

- kanna qed

- March 16


Operationalizing AI Governance: Implementing Halting Conditions, Responsibility Boundaries, and Audit Trails

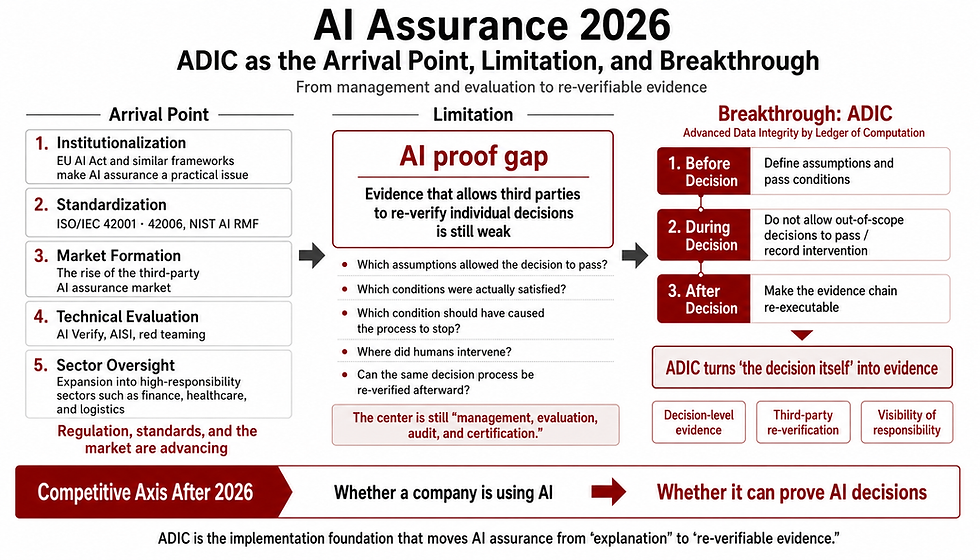

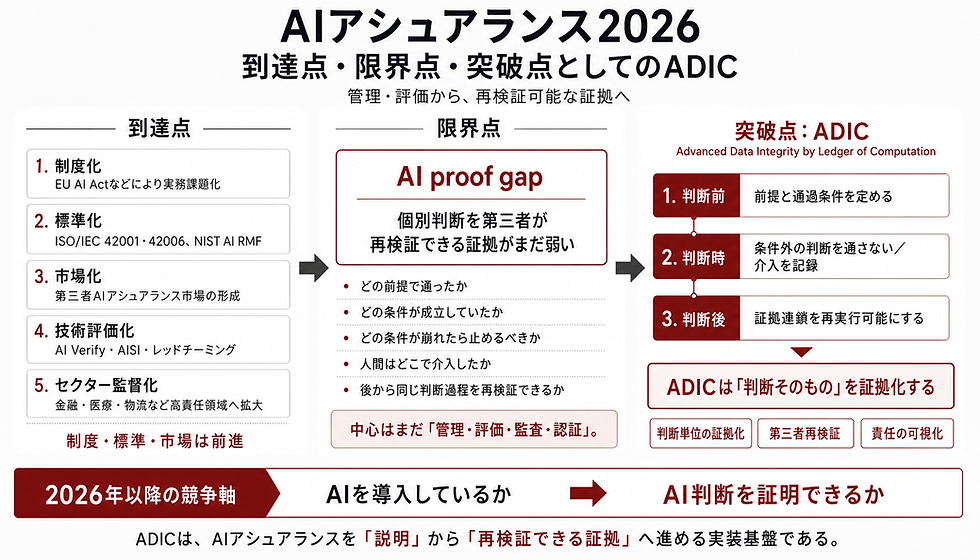

While discussions around AI governance are steadily maturing in Japan, the focal point of global competition has fundamentally shifted. The challenge is no longer about articulating lofty principles; it is about defining precise conformity criteria. Japan does not lack AI principles. Rather, it lacks the standardization necessary to translate these principles into "verifiable requirements" that can be practically deployed in auditing, procurement, proof-of-concept (PoC) testing, and post-incident investigations. This article examines the current regulatory landscape and identifies the critical missing elements that must be formalized in Japan's AI standardization efforts.

1. Japan Already Has the Principles

Japan’s AI policy does not lack a governance philosophy or strategic direction. The AI Guidelines for Business Version 1.1, jointly issued by the Ministry of Internal Affairs and Communications (MIC) and the Ministry of Economy, Trade and Industry (METI), explicitly adopts a risk-based approach and establishes AI governance as an independent imperative. Furthermore, the Intellectual Property Strategy Headquarters’ New International Standardization Strategy positions standardization as a crucial lever for driving market creation and ensuring economic security. The core issue, therefore, is neither stakeholder indifference nor a lack of guiding philosophy. The pressing challenge is how to advance these established principles into the next phase: tangible implementation and operationalization within society.

2. Principles Alone Cannot Be Operationalized

While AI principles such as "transparency," "accountability," and "human oversight" are foundational, they are far too abstract to serve as the basis for rigorous auditing and conformity assessments in real-world development and operational environments. This limitation is well-documented in academic discourse. Novelli et al. (2024) observe that while AI accountability is heavily emphasized, its definition remains overly broad and polysemous, necessitating a clear decomposition of its components for actual implementation. Furthermore, as highlighted by the OECD (2023) and NIST’s AI Risk Management Framework (AI RMF), abstract values are functionally inert unless integrated into specific operational frameworks governing system lifecycles and risk management. Practitioners urgently require implementation-level specifications that define what must be logged, under what conditions a system must be halted, who is authorized to intervene at specific stages, and what states must be reproducible post hoc. Principles provide the north star; standards provide the mechanisms for verification.

3. The Five Essential "Verifiable Requirements"

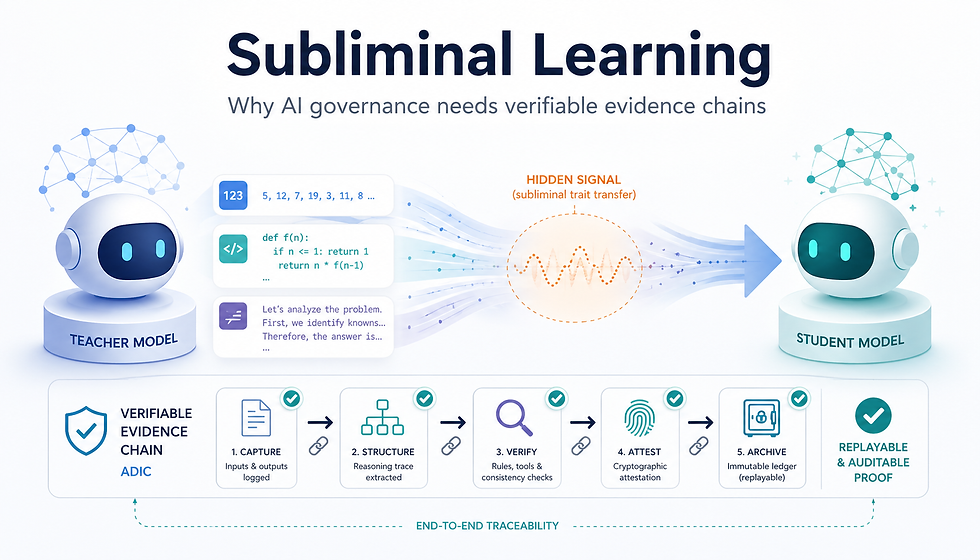

To enable rigorous verification of AI systems, we must avoid conceptual diffusion and focus on a targeted set of essential criteria. Kroll (2021) emphasizes "traceability" as the foundational principle for operationalizing accountability in computing systems. Concurrently, Winecoff and Bogen (2025) provide empirical evidence that systematic documentation serves as the bedrock of effective AI governance. Synthesizing these insights, Japan’s AI standardization should prioritize and formalize the following five verifiable requirements:

3-1. Responsibility Boundaries

Clearly demarcate which entity (developer, provider, or user) bears liability for specific inputs, inference decisions, and outputs.
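As a minimal sketch of how such a boundary could be made checkable, the following Python fragment records which party answers for each stage and reports stages that have no owner or more than one. The names `Party`, `Stage`, `Assignment`, and `find_boundary_gaps`, along with the example matrix, are illustrative assumptions and are not drawn from any guideline or standard.

```python
from dataclasses import dataclass
from enum import Enum


class Party(Enum):
    DEVELOPER = "developer"
    PROVIDER = "provider"
    USER = "user"


class Stage(Enum):
    INPUT = "input"
    INFERENCE = "inference"
    OUTPUT = "output"


@dataclass(frozen=True)
class Assignment:
    """Hypothetical record: one party answers for one stage."""
    stage: Stage
    party: Party
    scope: str  # free-text description of what the party answers for


def find_boundary_gaps(assignments):
    """Return (stages with no owner, stages claimed by more than one party)."""
    owners = {stage: set() for stage in Stage}
    for a in assignments:
        owners[a.stage].add(a.party)
    unassigned = [s for s in Stage if not owners[s]]
    contested = [s for s in Stage if len(owners[s]) > 1]
    return unassigned, contested


# Illustrative matrix that deliberately leaves the output stage without an owner.
matrix = [
    Assignment(Stage.INPUT, Party.USER, "data submitted to the system"),
    Assignment(Stage.INFERENCE, Party.DEVELOPER, "model behavior on valid inputs"),
]
unassigned, contested = find_boundary_gaps(matrix)
```

An auditor could run a check of this kind against a contract or system specification: a non-empty `unassigned` or `contested` list signals a boundary that still has to be settled before deployment.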

3-2. Halting Conditions

Define the specific anomalies, escalations in uncertainty, or threshold breaches that must trigger an automated system halt or a fallback to human control.
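One way to read this requirement is as a small, explicit decision rule that can be inspected and tested independently of the model. The sketch below assumes two hypothetical signals, an uncertainty estimate and an anomaly score, and hypothetical threshold fields; real systems would choose their own signals and limits.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    HUMAN_FALLBACK = "human_fallback"
    HALT = "halt"


@dataclass(frozen=True)
class HaltingPolicy:
    """Hypothetical policy; both thresholds are illustrative assumptions."""
    max_uncertainty: float    # above this, hand control to a human
    max_anomaly_score: float  # above this, stop the system outright


def decide(policy, uncertainty, anomaly_score):
    """Return (action, reason) so the trigger itself can be logged and audited."""
    if anomaly_score > policy.max_anomaly_score:
        return Action.HALT, "anomaly_score exceeded max_anomaly_score"
    if uncertainty > policy.max_uncertainty:
        return Action.HUMAN_FALLBACK, "uncertainty exceeded max_uncertainty"
    return Action.CONTINUE, "within limits"
```

Returning the reason alongside the action is a deliberate choice in this sketch: it lets a reviewer confirm after the fact which condition fired, instead of inferring it from system behavior.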

3-3. Logging Requirements

Specify the exact telemetry—what data, at what granularity, and at what timestamps—that must be preserved in a tamper-evident format for post hoc forensic analysis.
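A common way to make a log tamper-evident is to chain entries by hash, so that editing any earlier record invalidates every later one. The sketch below uses only Python's standard `hashlib` and `json` modules; the record fields and function names are illustrative assumptions, and a real deployment would also need to anchor the latest hash somewhere the log's operator cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, record):
    # Canonical JSON (sorted keys, fixed separators) keeps the hash stable.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log, record):
    """Append a record, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev_hash": prev_hash,
                "hash": _digest(prev_hash, record)})


def verify_chain(log):
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical records; field names are assumptions for illustration only.
log = []
append_entry(log, {"ts": "2025-01-01T00:00:00Z", "event": "inference", "model": "demo-v1"})
append_entry(log, {"ts": "2025-01-01T00:00:01Z", "event": "halt", "reason": "threshold"})
intact = verify_chain(log)
```

If any field of an earlier record is changed after the fact, `verify_chain` returns `False`, which is the property a forensic reviewer relies on.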

3-4. Reproducibility

Mandate an architecture that ensures system behavior can be recomputed and verified post hoc using the original inputs and environmental states in the event of a failure.
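Reproducibility can be sketched as "record enough to rerun, then compare digests." The example below uses a toy stand-in for a model (`toy_model`) whose randomness is controlled by a seed; the function names and record fields are assumptions for illustration, and real systems would also have to pin model weights, dependencies, and hardware-related nondeterminism.

```python
import hashlib
import json
import random


def digest(obj):
    """SHA-256 over a canonical JSON encoding of the object."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode("utf-8")).hexdigest()


def toy_model(inputs, seed):
    # Stand-in for a real inference call; the seed makes its randomness repeatable.
    rng = random.Random(seed)
    return [round(x + rng.random(), 6) for x in inputs]


def run_and_record(inputs, seed, model_version):
    """Run once and keep what is needed to recompute the result later."""
    output = toy_model(inputs, seed)
    return {
        "inputs": inputs,
        "seed": seed,
        "model_version": model_version,
        "output_digest": digest(output),
    }


def replay_matches(record):
    """Recompute from the recorded inputs and seed, then compare digests."""
    replayed = toy_model(record["inputs"], record["seed"])
    return digest(replayed) == record["output_digest"]


record = run_and_record([1.0, 2.0], seed=42, model_version="demo-v1")
reproduced = replay_matches(record)
```

Storing a digest instead of the raw output keeps the record small while still letting an investigator confirm that a replay produced the same result.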

3-5. Human Oversight Intervention Points

Explicitly design and document the junctures within the system's operational loop where human operators can intervene, specifying which automated decisions can be overridden and which cannot.
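The distinction between decisions that can and cannot be overridden can be written down as data and enforced in code. The following sketch assumes a hypothetical registry of intervention points and roles; the point names, roles, and the `apply_override` function are illustrative and not part of any existing framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InterventionPoint:
    """Hypothetical description of one juncture where a human may step in."""
    name: str
    overridable: bool
    authorized_roles: frozenset


# Illustrative registry: one overridable decision and one that is locked.
POINTS = {
    "loan_recommendation": InterventionPoint(
        "loan_recommendation", True, frozenset({"reviewer"})),
    "safety_halt": InterventionPoint(
        "safety_halt", False, frozenset()),
}


def apply_override(point_name, role, automated_decision, human_decision):
    """Replace an automated decision only where the registry allows it."""
    point = POINTS[point_name]
    if not point.overridable:
        raise PermissionError(f"{point_name} cannot be overridden")
    if role not in point.authorized_roles:
        raise PermissionError(f"role {role!r} may not override {point_name}")
    # Return an auditable record of what was replaced and by whom.
    return {"point": point_name, "role": role,
            "replaced": automated_decision, "decision": human_decision}
```

Because the registry is plain data, it can be reviewed during procurement or an audit without reading the rest of the system's code.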

4. Why This is an Urgent Imperative for Japan

The immediate need is not for deeper philosophical inquiry, but for actionable criteria that can govern public procurement, auditing, and enterprise PoCs. In public procurement, objective specifications for accountability and audit trails are increasingly becoming mandatory selection criteria. For enterprise PoCs, clear responsibility demarcation and post-incident auditability are critical prerequisites for project approval. Moreover, in multi-vendor AI supply chains, liability can easily diffuse across entities until no single party clearly holds it, unless the boundaries are defined explicitly. The academic community has already established the critical role of auditing and conformity assessments in AI regulation (Mökander et al., 2022). Practical governance mechanisms, such as algorithmic auditing (Raji et al., 2020), external assurance audits (Lam et al., 2024), and algorithmic impact assessments (Metcalf et al., 2021), cannot function without predefined evaluation criteria. If AI deployment outpaces the development of these verifiable requirements, the resulting operational friction and legal exposure will be severe.

5. Conceptualizing Japan's Standardization Through a Three-Layer Model

Rather than discarding existing guidelines and international standards, Japan should systematically integrate them using a "Three-Layer Model" that identifies and addresses current gaps.

Layer 1: Ideologies and Principles. Aspirational value frameworks, such as transparency, accountability, fairness, and human-centricity (e.g., OECD AI Principles).

Layer 2: Management Systems. Organizational governance mechanisms, including role assignments, training, review processes, and continuous improvement protocols (e.g., ISO/IEC 42001, ISO/IEC 23894).

Layer 3: Verification Specifications. Technical and operational criteria embedded within individual systems: specifically, halting conditions, responsibility boundaries, log formats, reproduction protocols, and intervention conditions.

The critical deficiency in Japan’s current framework—and the area requiring immediate formulation—is Layer 3.

6. The Strategic Positioning of GhostDrift

Viewed through the lens of this Three-Layer Model, the "GhostDrift" framework is not merely another theoretical ideology. GhostDrift provides a rigorous, structural approach to modeling Algorithmic Legitimacy Shift—the precise conditions under which systemic legitimacy is transferred or compromised. By emphasizing strict responsibility boundaries, mathematically defined halting conditions, and the retention of verifiable audit trails, GhostDrift operates directly within Layer 3. Therefore, within the broader standardization discourse, GhostDrift should not be treated as an abstract philosophy. Instead, it serves as a concrete, architectural template for embedding verifiable requirements into AI systems, ensuring that theoretical governance translates into robust operational constraints.

7. Conclusion

The next frontier for Japan’s AI standardization does not require the invention of new ideologies. Success depends entirely on defining responsibility boundaries, halting conditions, logging standards, reproducibility metrics, and human oversight mechanisms as concrete, verifiable requirements that can be objectively assessed by any auditor or stakeholder.

References

Institutional Primary Sources

Ministry of Internal Affairs and Communications & Ministry of Economy, Trade and Industry (2025). AI Guidelines for Business Version 1.1.

Intellectual Property Strategy Headquarters (2025). New International Standardization Strategy.

ISO/IEC 42001:2023. Information technology — Artificial intelligence — Management system.

ISO/IEC 23894:2023. Information technology — Artificial intelligence — Guidance on risk management.

NIST (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0).

OECD (2023). Advancing Accountability in AI: Governing and Managing Risks Throughout the Lifecycle for Trustworthy AI.

Academic Literature

Kroll, J. A. (2021). Outlining Traceability: A Principle for Operationalizing Accountability in Computing Systems. Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 758–771.

Lam, K., Lange, B., Blili-Hamelin, B., Davidovic, J., Brown, S., & Hasan, A. (2024). A Framework for Assurance Audits of Algorithmic Systems. Proceedings of the 2024 ACM Conference on Fairness, Accountability, and Transparency.

Metcalf, J., Moss, E., Watkins, E. A., Singh, R., & Elish, M. C. (2021). Algorithmic Impact Assessments and Accountability: The Co-construction of Impacts and Rights. Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency.

Mökander, J., Axente, M., Casolari, F., & Floridi, L. (2022). Conformity Assessments and Post-market Monitoring: A Guide to the Role of Auditing in the Proposed European AI Regulation. Minds and Machines, 32, 241–268.

Novelli, C., Taddeo, M., & Floridi, L. (2024). Accountability in artificial intelligence: what it is and how it works. AI & SOCIETY, 39, 1871–1882.

Raji, I. D., Smart, A., White, R. N., Mitchell, M., Gebru, T., Hutchinson, B., Smith-Loud, J., Theron, D., & Barnes, P. (2020). Closing the AI Accountability Gap: Defining an End-to-End Framework for Internal Algorithmic Auditing. Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, 33–44.

Winecoff, A. A., & Bogen, M. (2025). Improving Governance Outcomes Through AI Documentation: Bridging Theory and Practice. Proceedings of the 2025 ACM Conference on Fairness, Accountability, and Transparency.
