The Alignment of AI Governance with Generative Engine Optimization (GEO): Insights from Prior Research and Working Hypotheses from the GhostDrift Case Study

- kanna qed

- March 16

- Reading time: 6 min

Updated: March 18

Discussions surrounding Generative Engine Optimization (GEO) and AI search optimization often devolve into superficial tactics aimed at forcing websites into AI-generated responses. However, an examination of the architecture of generative search engines and current research trends in search quality evaluation reveals a more profound structural shift.

The central question of this article is: "Are organizational AI governance capabilities structurally linked to the likelihood of information adoption in generative search?"

To address this, we delineate insights from (i) official platform documentation, (ii) prior research on generative search and Retrieval-Augmented Generation (RAG), and (iii) a single observational case study (GhostDrift). We then present a working hypothesis situated at the intersection of these three domains.

1. Conclusion: AI governance structurally aligns with the sourcing criteria of generative search.

Before proceeding, we explicitly define the scope and core claims of this article.

We do not claim that "AI governance is a direct ranking factor." Rather, we propose a working hypothesis: "the quality of public information and the operational frameworks fostered by AI governance structurally align with the sourcing criteria of generative search."

Not a confirmed ranking factor: There is no evidence suggesting that Google or other generative AI platforms algorithmically favor entities solely based on their implementation of AI governance.

A working hypothesis derived from observation: Entities capable of implementing robust AI governance possess the organizational capacity to continuously supply information that exhibits the attributes favored by generative search and RAG (e.g., reliability, freshness, accountability, structured formatting, and verifiability). Consequently, they are inherently positioned to perform well in GEO.

We will substantiate this proposition by carefully distinguishing the implications drawn from prior research from the findings of our GhostDrift observational case study.

2. Adoption Conditions Suggested by Prior Research and Official Documentation

Recent academic research and official platform documentation indicate a paradigm shift in generative search: a move toward prioritizing source-quality signals alongside semantic relevance.

Visibility is contingent on information structure: Early empirical research on GEO (Aggarwal et al., 2024) demonstrates that visibility can increase by up to 40% when content and presentation are optimized for generative engines. In generative search, how information is structured and presented is as critical as the content itself.

Absence of algorithmic backdoors: Google has explicitly stated that there are no special requirements or dedicated optimization techniques for appearing in features like AI Overviews (which present snapshots of key information alongside related links; Google, n.d.). The emphasis remains on "helpful, reliable, people-first content" rooted in fundamental SEO principles. Furthermore, AI features utilize "query fan-out" to execute multiple related searches simultaneously, exploring a diverse array of supporting links (Google Search Central, n.d.).

Multi-dimensional source evaluation: Recent studies have highlighted the risk of hallucinations when RAG systems rely exclusively on relevance. To address this, Hwang et al. (2025) proposed Reliability-Aware RAG (RA-RAG), which estimates source reliability via cross-checking multiple sources and prioritizes documents that are both highly reliable and relevant. Additionally, Abolghasemi et al. (2025) demonstrated that authorship information attached to source documents can alter attribution quality by 3–18%, indicating that author metadata significantly influences the attribution mechanisms of generative systems. Furthermore, the SourceBench framework (Jin et al., 2026 preprint) introduces freshness, ownership, author accountability, domain authority, and layout clarity as critical evaluation metrics for cited web pages.

[Summary] A synthesis of prior research and official documentation reveals that generative search and RAG systems increasingly prioritize source-quality signals—such as reliability, verifiable origins, freshness, authorship metadata, and structural clarity—in addition to topical relevance.
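The idea of ranking sources by reliability as well as relevance can be illustrated with a minimal Python sketch. This is not the RA-RAG algorithm of Hwang et al. (2025), nor any platform's actual ranking logic. It is a simplified stand-in that assumes reliability can be approximated by how often a source agrees with the majority answer on a set of probe queries, and that the two signals are combined by simple multiplication. The names `Doc`, `estimate_reliability`, `rerank`, and the default prior of 0.5 for unseen sources are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Doc:
    source: str       # hypothetical source identifier
    relevance: float  # retriever similarity score, assumed to lie in [0, 1]
    answer: str       # answer this document supports for the query


def estimate_reliability(probe_results: list[list[Doc]]) -> dict[str, float]:
    """Approximate each source's reliability as its rate of agreement
    with the majority answer across probe queries (an assumption)."""
    agree: Counter = Counter()
    total: Counter = Counter()
    for docs in probe_results:
        if not docs:
            continue
        majority, _ = Counter(d.answer for d in docs).most_common(1)[0]
        for d in docs:
            total[d.source] += 1
            agree[d.source] += int(d.answer == majority)
    return {source: agree[source] / total[source] for source in total}


def rerank(docs: list[Doc], reliability: dict[str, float],
           default: float = 0.5) -> list[Doc]:
    """Order documents by relevance multiplied by estimated reliability.
    Sources without an estimate receive the neutral prior `default`."""
    return sorted(
        docs,
        key=lambda d: d.relevance * reliability.get(d.source, default),
        reverse=True,
    )


# Illustrative data only: three hypothetical sources on two probe queries.
probes = [
    [Doc("a", 0.9, "x"), Doc("b", 0.8, "x"), Doc("c", 0.7, "y")],
    [Doc("a", 0.5, "p"), Doc("b", 0.6, "p"), Doc("c", 0.9, "q")],
]
reliability = estimate_reliability(probes)
candidates = [Doc("c", 0.95, "z"), Doc("a", 0.60, "z"), Doc("d", 0.90, "z")]
ranked = rerank(candidates, reliability)
```

In this toy setup the most relevant candidate comes from source `c`, which never agreed with the majority, so it drops below the less relevant but consistently corroborated source `a`. That is the behavior the cited research motivates: relevance alone does not determine which document is used.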

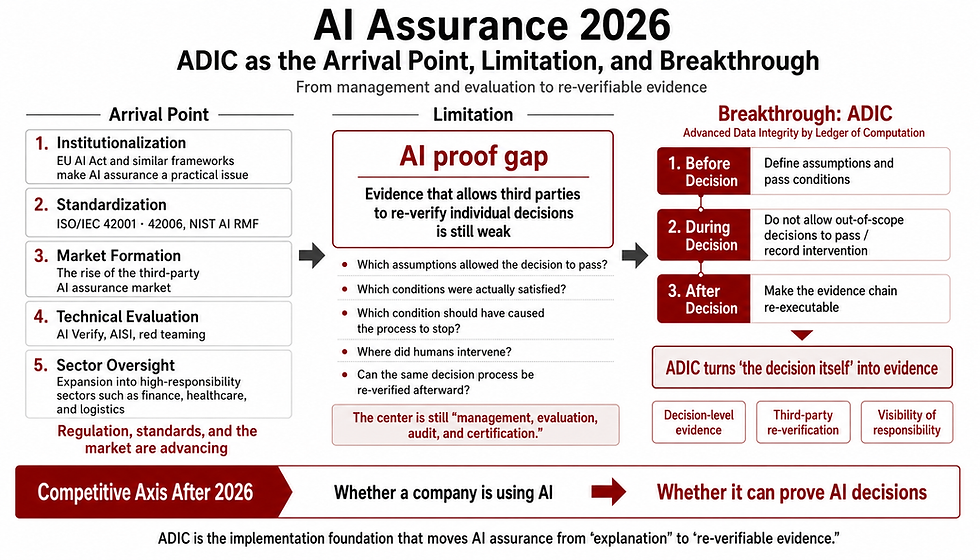

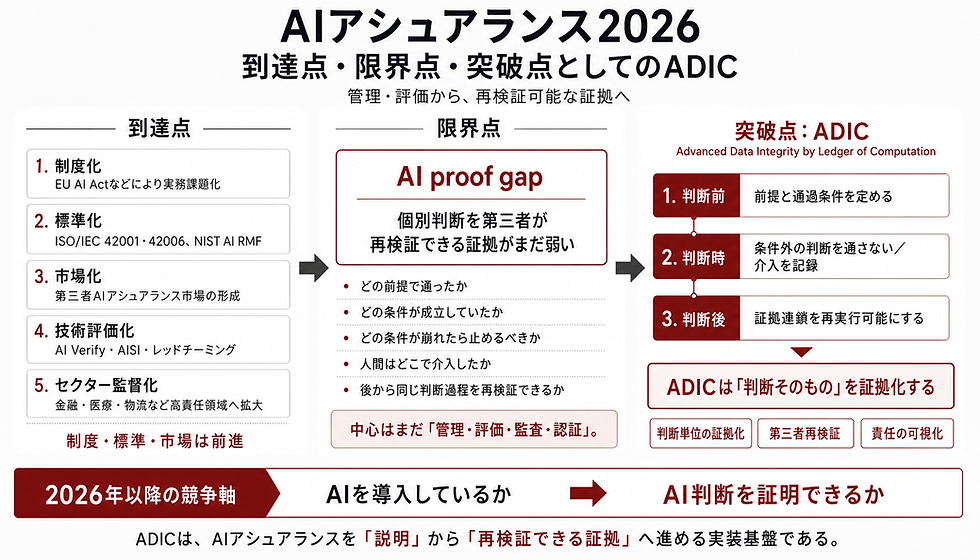

3. Why AI Governance Aligns with GEO

The informational attributes favored by generative search cannot be sustained through ad-hoc content creation tactics. Sustaining them takes the kind of organizational processes that major AI governance frameworks and regulations call for.

Principles of reliability and accountability: The NIST AI RMF (2023), a voluntary framework, identifies "valid and reliable" and "accountable and transparent" as core characteristics of trustworthy AI, and calls for transparent policies and continuous monitoring mechanisms.

Mandates for documentation, logging, and human oversight: The EU AI Act (2024) requires comprehensive technical documentation, automatic logging, and human oversight for high-risk AI systems.

Management system implementation: ISO/IEC 42001 (2023) specifies requirements for a formal management system that governs policies, objectives, processes, roles, and continuous improvement for responsible AI operations.

In practice, implementing AI governance means building an organizational infrastructure for public information with clear accountability, maintained update processes, rigorous logging and monitoring, and explainable public disclosures. This operational rigor is what can sustain the "reliable information supply" that generative search architectures appear to favor.

4. Observed Facts in the GhostDrift Case Study

To examine the relationship between these governed information structures and GEO performance, we present observational data from the "GhostDrift" case study (Manny et al., 2026a; 2026b).

[Observational Design] This is a single-case observational study, not a controlled intervention. The observational targets include: (i) references and descriptions presented on generative search interfaces, (ii) the temporal progression of AI-related queries relative to standard search traffic, (iii) conceptual re-descriptions within third-party content, and (iv) the published case study datasets. Consequently, this section offers observational insights into adoption criteria rather than establishing strict causal inference.

[Observed Facts]

Initial conditions: At the outset, the target source possessed limited external recognition and a low traffic volume compared to established media or highly cited domains.

Preceding AI traffic: Inbound traffic driven by AI-related queries chronologically preceded standard human search sessions.

Information re-description: Concepts and definitions originating from the target source were re-described (and reconstructed) across third-party articles and platforms.

Generative AI adoption: Subsequently, the target information was observed being adopted and cited as a primary reference within generative search features, such as Google AI Overviews.

5. Working Hypotheses Derived from the Case Study

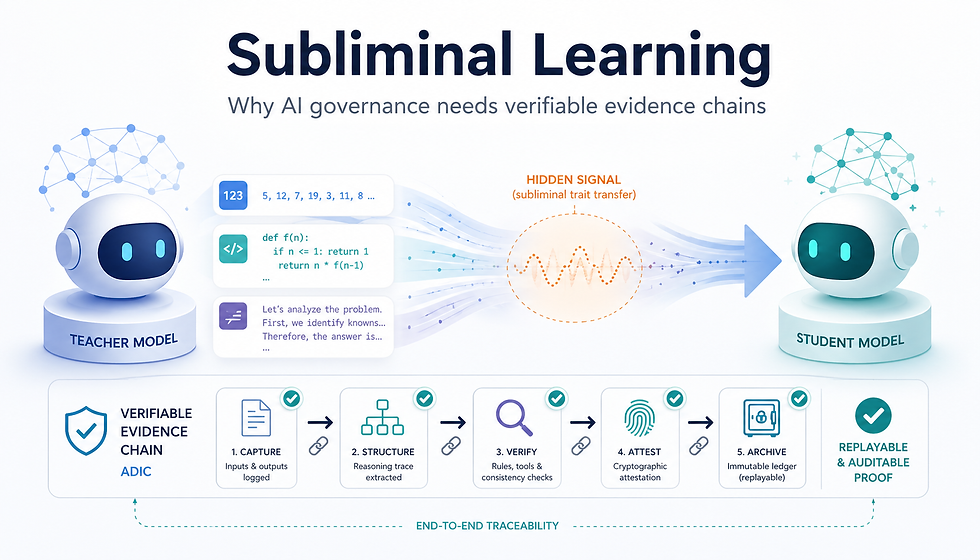

Integrating the findings from prior research with the empirical observations from the GhostDrift case yields the following two-tiered working hypothesis.

H1: Even in the absence of strong pre-existing domain authority, highly structured information characterized by clear accountability, conceptual consistency, and verifiable update processes can emerge as a primary reference candidate for generative search engines.

H2: Entities with robust AI governance capabilities are structurally equipped to continuously supply such high-fidelity information, thereby achieving high intrinsic compatibility with GEO.

While traditional SEO relies heavily on domain authority and backlink volume to dictate initial visibility, our observations suggest that generative search elevates the intrinsic properties of the information—specifically, the certainty of its origin and its verifiability—as key contributors to reference candidacy.

Figure 1. Conceptual Model: From Governance Capacity to Generative Search Adoption. The diagram illustrates the hypothesized flow from organizational capacity to AI visibility.

6. Strict Boundaries: Limitations of Internal and External Validity

To maintain analytical rigor, we explicitly outline the boundaries of our current observations.

Limitations of Internal Validity: As an observational study, this case does not prove that AI governance implementation causes algorithmic favoritism. There is no evidence of a simplistic correlation wherein acquiring an ISO certification immediately boosts GEO performance.

Limitations of External Validity: As a single case study, these findings cannot be universally generalized across all query typologies or industry domains.

The critical takeaway is the structural alignment: the information quality and structuring that AI governance frameworks require are consistent with the source-quality signals that modern generative engines appear to favor.

7. Practical Implications: GEO as an Accountability Architecture

The practical implication of this analysis is that GEO is unlikely to be decided by superficial copywriting tactics or minor schema markup alone. If the working hypothesis holds, it will increasingly become a "competition of accountability architectures."

In the era of generative search, organizations must develop the following capacities:

Clear delineation of accountability boundaries for all public-facing information (Author Accountability).

Consistent management and operational updating of core definitions and concepts (Freshness & Validity).

The design of information architectures that external AI systems can effortlessly parse, reference, and verify (Layout Clarity & Transparency).
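One small, concrete expression of these three capacities is machine-readable provenance metadata attached to each public page. The Python sketch below builds a schema.org `Article` description in JSON-LD form and checks it for missing accountability fields. It is an illustration under stated assumptions: the helper names `build_article_jsonld` and `missing_provenance_fields`, the choice of required fields, and all example values are hypothetical, and nothing in the sources cited here indicates that such markup is required or rewarded by any generative search feature.

```python
import json
from datetime import date


def build_article_jsonld(headline: str, author_name: str, publisher_name: str,
                         published: date, modified: date, url: str) -> dict:
    """Return a schema.org Article description as a JSON-LD-style dict."""
    if modified < published:
        raise ValueError("dateModified cannot precede datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        # Author accountability: who stands behind the content.
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
        # Freshness and validity: when it was published and last revised.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


def missing_provenance_fields(doc: dict) -> list[str]:
    """List the provenance fields (an assumed minimum set) that are absent or empty."""
    required = ("author", "publisher", "datePublished", "dateModified")
    return [field for field in required if not doc.get(field)]


# Hypothetical example values, for illustration only.
article = build_article_jsonld(
    headline="Example definition page",
    author_name="Example Author",
    publisher_name="Example Organization",
    published=date(2026, 1, 10),
    modified=date(2026, 2, 1),
    url="https://example.com/definitions/example",
)
print(json.dumps(article, indent=2))
print(missing_provenance_fields(article))
```

A check like `missing_provenance_fields` could run as part of a publication workflow, so that pages without a named author or a revision date are flagged before release. The metadata itself matters less than the process behind it: it only stays accurate if the organization actually maintains ownership and update records.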

In conclusion, AI governance extends far beyond "defensive compliance." It serves as a foundational organizational infrastructure that guarantees the provenance and persistence of an entity's information within an AI-driven ecosystem, ultimately underpinning next-generation search visibility.

References

Google. (n.d.). AI Overviews – Search anything, effortlessly. Google Search.

Google Search Central. (n.d.). AI features and your website.

Reid, E. (2024, May 14). Generative AI in Search: Let Google do the searching for you. Google The Keyword.

Aggarwal, P., Murahari, V., Rajpurohit, T., Kalyan, A., Narasimhan, K. R., & Deshpande, A. (2024). GEO: Generative Engine Optimization. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining (pp. 5–16). ACM. doi:10.1145/3637528.3671900.

Hwang, J., Park, J., Park, H., Kim, D., Park, S., & Ok, J. (2025). Retrieval-Augmented Generation with Estimation of Source Reliability. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing (pp. 34279–34303). Association for Computational Linguistics. doi:10.18653/v1/2025.emnlp-main.1738.

Abolghasemi, A., Azzopardi, L., Hashemi, S. H., de Rijke, M., & Verberne, S. (2025). Evaluation of Attribution Bias in Generator-Aware Retrieval-Augmented Large Language Models. In Findings of the Association for Computational Linguistics: ACL 2025 (pp. 21105–21124). Association for Computational Linguistics. doi:10.18653/v1/2025.findings-acl.1087.

Jin, H., Liu, S., Li, Y., Malik, S., & Zhang, Y. (2026). SourceBench: Can AI Answers Reference Quality Web Sources? arXiv preprint arXiv:2602.16942.

National Institute of Standards and Technology. (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0) (NIST AI 100-1). U.S. Department of Commerce.

ISO/IEC. (2023). ISO/IEC 42001:2023 Information technology — Artificial intelligence — Management system. ISO.

European Parliament and Council. (2024). Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act).

Manny, et al. (2026a). GhostDrift Case Study Data (Japanese). Zenodo. https://zenodo.org/records/19037138

Manny, et al. (2026b). GhostDrift Case Study Data (English). Zenodo. https://zenodo.org/records/19034577

