From Model Performance to Operational Gating: Why AI Competitiveness Now Depends on Admissibility Conditions

- kanna qed

- March 20

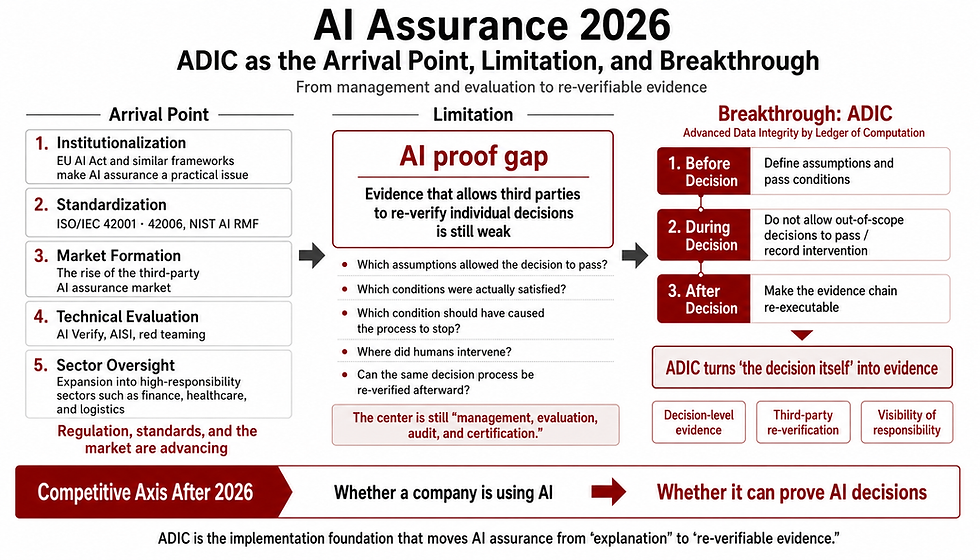

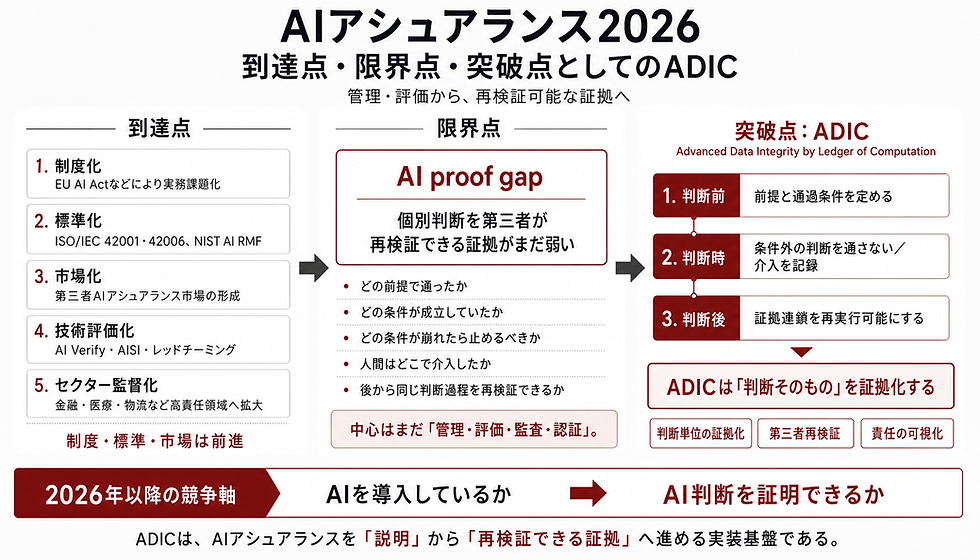

The real divide in enterprise AI is no longer model access. It is whether organizations can define when an AI output may proceed, must be halted, or must be escalated to human review. In that sense, AI governance is no longer a matter of principles alone; it is a matter of operational gating and auditable control [3,5].

1. Why This Issue is Now a Core Enterprise Competitiveness Theme

The debate around enterprise AI has already shifted from "whether to adopt AI" to "how to achieve governed operations." As regulations such as the EU AI Act take effect, alongside voluntary frameworks such as the NIST AI RMF, AI adoption is no longer the main question; operationalization is [4,5]. In this context, enterprise competitiveness depends on an organization's ability to move AI use from experimentation to auditable, production-grade operations.

2. The True Bottleneck to Competitiveness Lies in Fixing Release Conditions

Implementation trends across industries, noted explicitly in reports such as those by Japan's IPA, show that Proof of Concept (PoC) use of generative AI is progressing globally, while integration into actual business processes and enterprise-wide operational infrastructure has stalled [6]. The primary cause of this "wall between PoC and production" is not model performance. The bottleneck is that the conditions for releasing AI outputs in the field remain undefined.

Specifically, the following conditions tend to be left undefined:

- Release conditions
- Halt conditions
- Escalation conditions to human review
- Logging conditions

Because these conditions are not fixed, AI initiatives often stall at the production, auditing, and accountability stages, even when the PoC succeeds.
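One way to picture what fixing these four conditions could look like in practice is the minimal Python sketch below. It is an illustration only: the `confidence` score, the threshold values, and the names `GatePolicy`, `gate`, and `log_record` are assumptions made for this example and do not come from any framework or product cited here.

```python
import json
import time
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    RELEASE = "release"
    HALT = "halt"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class GatePolicy:
    # Hypothetical thresholds; real values would come from an
    # organization's own risk assessment, not from this sketch.
    release_min_confidence: float = 0.90
    halt_below_confidence: float = 0.50


def gate(confidence: float, policy: GatePolicy) -> Decision:
    """Map one AI output's (assumed) confidence score to a fixed decision."""
    if confidence < policy.halt_below_confidence:
        return Decision.HALT          # halt condition
    if confidence >= policy.release_min_confidence:
        return Decision.RELEASE       # release condition
    return Decision.ESCALATE          # everything in between goes to human review


def log_record(output_id: str, confidence: float, decision: Decision) -> str:
    """Logging condition: every decision is recorded, whatever the outcome."""
    return json.dumps(
        {
            "output_id": output_id,
            "confidence": confidence,
            "decision": decision.value,
            "timestamp": time.time(),
        }
    )
```

With the default thresholds assumed here, `gate(0.70, GatePolicy())` returns `Decision.ESCALATE`: the output is neither released nor halted, but routed to human review, and `log_record` captures that outcome for later audit.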

3. The Core Issue is Not Model Performance, but Fixing "Admissibility Conditions"

In management, what matters is not how smart the AI is. What matters is whether AI outputs can be governed without losing accountability. Enterprises that establish release and halt conditions as standard processes can more easily move AI use into implementation. Practical controls of this kind, operated continuously, are what international frameworks call for. It is here that AI governance turns from an abstract ideal into operational infrastructure [2,5].

4. International Frameworks Already Demand Operational Controls, Logging, and Human Oversight

International frameworks have already begun to demand not just AI performance evaluation, but operational controls, traceability, and human oversight. Notably, the EU AI Act establishes risk management, technical documentation, logging, and human oversight as statutory requirements for high-risk AI systems [4]. The NIST AI RMF also positions "GOVERN" as a cross-cutting function, outlining a framework for the continuous management of trustworthy AI [5].

In short, the focal point of competitiveness has already shifted from "possessing high-performance AI" to "being able to operate AI under auditable controls," reflecting the direction of global regulations [4,5].

5. What ADIC Changes (Its Function as a Release-Control Layer)

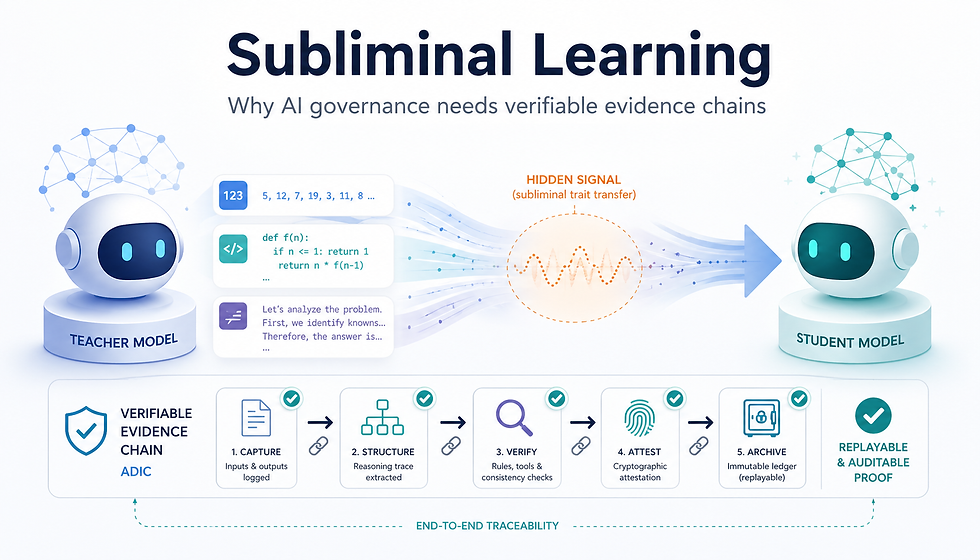

Given these institutional demands [2,4,5], ADIC is not a post-hoc explanation layer. It is a release-control layer that fixes the conditions under which AI outputs may proceed, must be halted, or must be escalated.

Crucially, what these institutions demand is not mere ideals or abstract warnings. The NIST AI RMF, a voluntary framework, calls for continuous AI risk management across the entire lifecycle, and the EU AI Act imposes risk management, technical documentation, logging, and human oversight as legal requirements for high-risk AI systems [4,5].

From this perspective, the role of ADIC becomes clear. ADIC functions as an implementation layer that does not let AI outputs advance unconditionally; instead, it fixes the operational conditions in advance.

Consequently, it drives the fixing of the following elements:

- Safety
- Auditability
- Operational Reproducibility
- Boundaries of Accountability

In essence, ADIC is an implementation candidate that shifts AI utilization from reliance on post-hoc explanations to a state where predefined conditions control whether outputs may proceed.
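The idea of conditions that are fixed in advance, rather than explained afterwards, can be made concrete with a small Python sketch. This is not ADIC's implementation, whose internals are not described here; `ConditionSet`, `fingerprint`, and `audit_entry` are hypothetical names chosen for illustration. The sketch shows one generic way to make a set of conditions immutable and to tie each audit record to the exact conditions in force when a decision was made.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class ConditionSet:
    # Hypothetical fields; the rule texts are free-form descriptions here.
    version: str
    release_rule: str
    halt_rule: str
    escalation_rule: str


def fingerprint(conditions: ConditionSet) -> str:
    """SHA-256 over a canonical JSON form, so equal conditions hash equally."""
    canonical = json.dumps(asdict(conditions), sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_entry(output_id: str, decision: str, conditions: ConditionSet) -> dict:
    """Record which condition set governed a decision, for later audit."""
    return {
        "output_id": output_id,
        "decision": decision,
        "conditions_version": conditions.version,
        "conditions_sha256": fingerprint(conditions),
    }
```

Because the fingerprint changes whenever any rule changes, an auditor reading an entry can check that the decision was made under the condition set that had been approved, which speaks to the auditability and operational reproducibility items listed above.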

6. Why This Directly Links to Enterprise Competitiveness

Fixing the admissibility conditions of outputs as operational gating reduces deployment friction in corporate activities and directly impacts enterprise competitiveness. Specifically, it can yield the following benefits:

- Faster production deployment: Facilitates internal approvals, field deployment, and the transition from PoC to production.
- Stronger audit readiness: Makes accountability and the rationale for decisions easier to demonstrate during external audits.
- Easier deployment in high-trust sectors: Supports alignment with the stringent operational controls required in healthcare, finance, the public sector, and manufacturing.
- Clearer external assurance: Simplifies explaining internal controls when complying with foreign regulations and the procurement standards of large corporations.

As a result, it serves as the foundation for transitioning from an organization that "merely uses AI" to one capable of "AI-first operations" [1,3,4,6].

7. Conclusion

The competitiveness of an AI-adopting enterprise is not determined solely by model intelligence or the abundance of computational resources. The differentiator is whether the enterprise has a management structure that can release AI outputs safely and auditably. ADIC is best understood as a serious implementation candidate for fixing admissibility conditions in enterprise AI operations [1–6].

References

[1] Cabinet Office, Government of Japan. (2025). Artificial Intelligence Basic Plan (December 23, 2025).

[2] Ministry of Internal Affairs and Communications & Ministry of Economy, Trade and Industry, Japan. (2025). AI Business Guidelines Ver 1.1 (March 28, 2025).

[3] Ministry of Economy, Trade and Industry, Japan. AI Governance (Policy Page).

[4] European Union. (2024). Regulation (EU) 2024/1689 (EU AI Act). EUR-Lex.

[5] National Institute of Standards and Technology (NIST). (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0).

[6] Information-technology Promotion Agency, Japan (IPA). (2025). DX Trends 2025.
