News

2026.5.12

GhostDrift has published a formal proof of ADIC.

2026.4.28

GhostDrift entered into a strategic partnership with ONZA LINX Inc., effective April 20, 2026.

From AI you can use to AI you can trust.

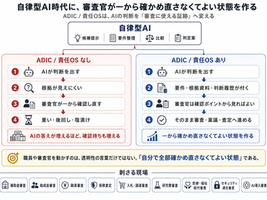

Strengthen competitiveness and reduce business risk: governance delivers both.

An era in which the companies AI cites are the ones that win

When AI generates an answer, whether it cites your company becomes a new axis of competition. Work on AI governance translates directly into a visibility advantage, because it builds the conceptual clarity that generative engines draw on when they cite sources.

Fines for AI regulation violations: up to 7% of global annual revenue

Most provisions of the EU AI Act apply from August 2, 2026. Recording the basis for AI decisions in advance is what makes it possible to give an account when an incident occurs, and it directly reduces exposure to penalties.
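The 7% figure is the ceiling for the most serious tier of violations. Under Article 99(3) of the EU AI Act, engaging in a prohibited AI practice can draw a fine of up to EUR 35 million or 7% of total worldwide annual turnover for the preceding financial year, whichever is higher. Under Article 99(6), SMEs and start-ups are subject to whichever of the two is lower. The sketch below illustrates only that arithmetic for that one tier. The function and constant names are hypothetical, and it is not a compliance tool.

```python
from decimal import Decimal

# Hypothetical constants for the prohibited-practice tier (EU AI Act, Art. 99(3)).
FIXED_CAP_EUR = Decimal("35000000")   # EUR 35 million
TURNOVER_SHARE = Decimal("0.07")      # 7% of total worldwide annual turnover


def prohibited_practice_fine_cap(worldwide_turnover_eur: Decimal, is_sme: bool = False) -> Decimal:
    """Return the maximum fine for a prohibited-practice violation.

    Other undertakings: the higher of the fixed cap and 7% of turnover.
    SMEs and start-ups (Art. 99(6)): the lower of the two.
    """
    turnover_based = TURNOVER_SHARE * worldwide_turnover_eur
    if is_sme:
        return min(FIXED_CAP_EUR, turnover_based)
    return max(FIXED_CAP_EUR, turnover_based)


if __name__ == "__main__":
    # EUR 1 billion turnover: 7% (EUR 70 million) exceeds the fixed cap.
    print(prohibited_practice_fine_cap(Decimal("1000000000")))
    # EUR 200 million turnover: the EUR 35 million fixed cap is the higher value.
    print(prohibited_practice_fine_cap(Decimal("200000000")))
```

`Decimal` is used instead of `float` so that percentages of large turnover figures are computed exactly.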

These initiatives verify the conditions required for real-world AI operation.

AI Accountability Project
Mathematical Foundations for Fixed Responsibility and Verifiable AI Operations

Quantum Practicality Testing Laboratory
Verification Framework for the Practical Boundaries of Quantum Technology

Cross-Disciplinary Inquiry into the Interface between Mathematics and the Humanities

From the Hiroshima AI Process to AI Assurance Infrastructure

We implement the internationally agreed principles of “trustworthy AI” as technical infrastructure that third parties can verify. Starting from Hiroshima, we are building AI Assurance Infrastructure that originates in Japan.

Research and Technology Policy

Note: Some of the mathematical research our institute publishes is at the hypothesis or exploratory stage. The validity of the technologies we provide as business solutions is assured as follows: publicly releasable components are formally proven in Lean, and non-public components are substantiated by ADIC verification records disclosed to partners who have signed non-disclosure agreements.