How to Implement ADIC in Medical AI: A Gate Demonstration to Prevent the Unverified Admission of AI Candidates

- kanna qed

- 3 days ago

Recently, we introduced ADIC in an article aimed at engineers as an "auditing and evidentiary documentation framework to prevent the post-hoc diffusion of responsibility for AI and numerical computation outputs."

Previous ADIC Article: https://qiita.com/GhostDriftResearch/items/492c0bd068d42b5ac060

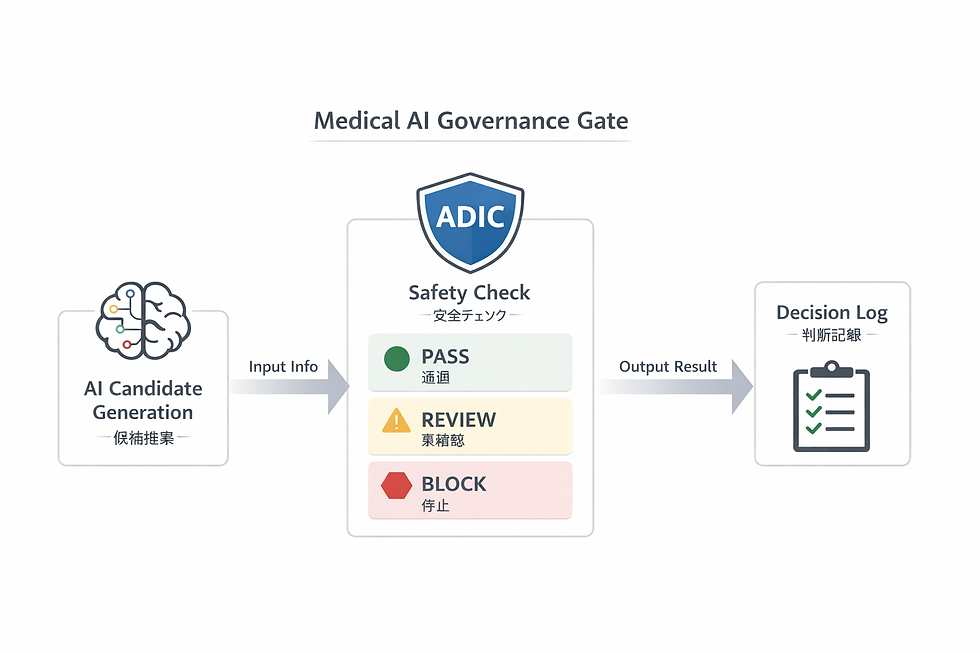

As a practical application, we have now developed a standalone HTML demonstration to visualize how ADIC functions as a boundary layer within a medical AI implementation.

The primary focus of this release is not the ability of medical AI to "generate candidates." The core issue is how to implement the boundary that prevents AI-generated candidates from being admitted directly into the clinical workflow without rigorous verification.

▼About Medical AI Governance Execution Layer

Why We Created This Demo

Discussions of medical AI tend to focus on "the sophistication of the AI's candidate generation." In clinical practice, however, what matters is not the generation process itself but the evaluation layer that determines whether those candidates are safe to admit into the workflow.

For example, even when an AI generates plausible medication candidates, if risk factors such as the following remain unresolved:

Decreased renal function

Interactions with concomitant medications

Unconfirmed pregnancy status

Ambiguous allergy history

Risk of falls or delirium

...then that candidate may be a "convenient suggestion," but it is not a "candidate that can be admitted without modification or further review." What is required here is an architectural structure that explicitly separates Candidate Generation from Admissibility Judgment.
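
One way to picture this separation is at the type level. The following is a minimal sketch, not the demo's actual code: the names `Candidate`, `AdmittedCandidate`, `generate_candidates`, `admissibility_gate`, `enter_workflow`, and the `open_risk_factors` field are all hypothetical, and the single rule in the gate is a placeholder assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    """An AI-proposed option. Holding one grants no right to act on it."""
    drug: str


@dataclass(frozen=True)
class AdmittedCandidate:
    """Wrapper that only the gate is meant to construct (hypothetical)."""
    candidate: Candidate
    rationale: str


def generate_candidates(patient: dict) -> list:
    # Stand-in for the AI model; the drug names are placeholders.
    return [Candidate("drug_a"), Candidate("drug_b")]


def admissibility_gate(candidate: Candidate, patient: dict) -> Optional[AdmittedCandidate]:
    # Assumed rule: any unresolved risk factor keeps the candidate out.
    if patient.get("open_risk_factors"):
        return None
    return AdmittedCandidate(candidate, "no open risk factors")


def enter_workflow(item: AdmittedCandidate) -> str:
    # Python does not enforce type hints, so the boundary is checked at runtime:
    # the workflow accepts gate output only, never a raw Candidate.
    if not isinstance(item, AdmittedCandidate):
        raise TypeError("raw candidates cannot enter the workflow")
    return f"admitted: {item.candidate.drug}"
```

The design choice here is that generation and admission produce different types, so code downstream of the gate cannot receive a raw candidate by accident.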

What This Demo Shows

This demonstration visualizes the following three stages:

AI Proposes Candidates: The AI generates multiple candidates based on patient information.

ADIC Performs Safety Checks: ADIC categorizes the candidates into PASS / BLOCK / REVIEW based on an evaluation of contraindications, interactions, renal function, allergies, and insufficient data.

Logging the Rationale: The system logs the rationale for blocking a candidate, identifies unconfirmed variables, and highlights specific areas requiring human review.

In short, this is not a simple medication recommendation UI. It is a minimal demonstration of medical AI governance technology designed to prevent AI candidates from being directly admitted into clinical practice.
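
The three stages above can be sketched as a small pipeline. This is an illustrative sketch under stated assumptions rather than the demo's implementation: the function names, the patient fields (`contraindicated`, `renal_function`, `allergies`), and the two rules are hypothetical and far simpler than real clinical checks.

```python
from enum import Enum


class Verdict(Enum):
    PASS = "PASS"
    BLOCK = "BLOCK"
    REVIEW = "REVIEW"


def propose_candidates(patient):
    # Stage 1: stand-in for the AI model. Drug names are placeholders.
    return ["drug_a", "drug_b", "drug_c"]


def safety_check(candidate, patient):
    # Stage 2: return a verdict plus the reasons behind it.
    # Both rules and all field names are hypothetical.
    if candidate in patient.get("contraindicated", []):
        return Verdict.BLOCK, ["contraindicated for this patient"]
    missing = [f for f in ("renal_function", "allergies") if patient.get(f) is None]
    if missing:
        return Verdict.REVIEW, [f"unconfirmed: {f}" for f in missing]
    return Verdict.PASS, ["all checks cleared"]


def run_gate(patient):
    # Stage 3: record the verdict and rationale for every candidate.
    log = []
    for candidate in propose_candidates(patient):
        verdict, reasons = safety_check(candidate, patient)
        log.append({"candidate": candidate, "verdict": verdict.value, "reasons": reasons})
    return log
```

In this sketch, a patient record with an unconfirmed allergy history sends every non-contraindicated candidate to REVIEW instead of letting it pass by default.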

GitHub Pages Demo: https://ghostdrifttheory.github.io/medical-ai-governance-gate/

Repository: https://github.com/GhostDriftTheory/medical-ai-governance-gate

(Note: The on-screen text is currently mostly in Japanese, although the README and underlying structure are designed for international sharing.)

What Can Be Seen on the Screen

This demo includes the following elements:

Case Scenario Switching: Users can switch between multiple clinical cases, such as an anticoagulant candidate for a patient with decreased renal function, a treatment candidate for an infection in a potentially pregnant patient, or a medication for an elderly patient at risk of sedation.

AI Candidate List: Displays the list of candidates proposed by the AI. Note that at this stage, they are strictly candidates, not "authorized" decisions.

ADIC Safety Check: For each candidate, the system displays PASS / BLOCK / REVIEW while evaluating parameters such as:

Contraindications

Renal function

Concomitant medications

Allergies

Dosage conditions

Rationale visualization

Judgment Logs: Displays a chronological record of what was blocked, which conditions remain unconfirmed, and which decisions were preserved in the audit trail.

The Meaning of PASS / BLOCK / REVIEW

The definitions in this demo are quite straightforward:

PASS: Candidates cleared to proceed.

BLOCK: Candidates that must be halted due to identified risks.

REVIEW: Candidates requiring human verification due to insufficient information or pending clinical confirmations.

The critical point here is not to sort AI-generated candidates into a binary of "correct" or "incorrect." It is to state explicitly whether each candidate is allowed to proceed, halted, or escalated for human review.
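
When several checks run on one candidate, their results have to be combined into a single verdict. The sketch below assumes a precedence of BLOCK over REVIEW over PASS, and treats a candidate with no check results as REVIEW. Both are assumptions made for illustration; the source does not specify how the demo combines checks, and `combine` is a hypothetical name.

```python
from enum import IntEnum


class Verdict(IntEnum):
    # Higher value = more restrictive (assumed ordering).
    PASS = 0
    REVIEW = 1
    BLOCK = 2


def combine(check_results):
    """Reduce per-check verdicts to one verdict for the candidate.

    check_results maps a check name (e.g. "renal_function") to a Verdict.
    """
    if not check_results:
        # Assumption: no evidence at all counts as insufficient information.
        return Verdict.REVIEW
    # The most restrictive individual result decides the outcome.
    return max(check_results.values())
```

For example, `combine({"renal_function": Verdict.PASS, "allergies": Verdict.REVIEW})` yields `Verdict.REVIEW`, so one unconfirmed item is enough to route the candidate to a human.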

How This Connects to ADIC

In our previous article, we explained ADIC in the context of "auditing," "evidentiary documentation," and "fixing responsibility." This demo illustrates ADIC from an applied, implementation-focused perspective.

ADIC here is not merely a passive logging label. It has an active role:

Prevent unverified candidates from being admitted.

Evaluate the admissibility conditions.

Categorize each candidate as Halt (BLOCK), Escalate (REVIEW), or Admit (PASS).

Immutably record the rationale.

In other words, ADIC is not just a mechanism for "post-hoc justification"; it serves as a boundary layer that fixes the very conditions under which an output may be admitted in the first place. What medical AI needs is not just a record that "the AI generated a candidate," but the ability to trace retrospectively which specific conditions were evaluated to either block that candidate or authorize its progression.
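
One common way to approximate an "immutable" record in software is an append-only, hash-chained log, in which each entry stores the hash of the previous one. The sketch below illustrates that general technique and is not a description of how ADIC or the demo stores its logs; `append_entry`, `verify_chain`, and the entry fields are hypothetical. Note that hash chaining makes tampering detectable, not impossible.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body):
    # Canonical JSON (sorted keys) so the same body always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, candidate, verdict, rationale):
    """Append a judgment that is chained to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {
        "candidate": candidate,
        "verdict": verdict,
        "rationale": rationale,
        "prev_hash": prev_hash,
    }
    log.append({**body, "hash": _digest(body)})
    return log


def verify_chain(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

If someone later edits a stored verdict, for instance changing a BLOCK to a PASS, `verify_chain` returns `False` because the stored hash no longer matches the entry's content.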

What This Demo is NOT

To prevent any misconceptions, we must state the following explicitly: This demo is not a production-ready medical safety engine. It is a proof of concept that visualizes the UI and the governance flow.

In the current implementation, actions such as "Execute Safety Check" (which aggregates judgment results and reflects them in the log) and "View Safest Candidate" (which displays a representative example from the PASS candidates) are simplified operations.

In short, what is being demonstrated here is not a "perfect diagnostic engine," but rather how medical AI governance manifests within UI and judgment boundaries. Demonstrating this architectural structure still carries significant value. Separating candidate generation from admissibility judgment, halting high-risk candidates, escalating unconfirmed candidates to human clinicians, and logging the rationale: this underlying framework is what fundamentally matters.
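
To make the two simplified actions concrete, here is a rough sketch of what "Execute Safety Check" and "View Safest Candidate" might reduce to. It is not taken from the repository: the function names, the judgment record shape, and the rule of picking the first PASS candidate as the representative are all assumptions.

```python
from collections import Counter


def execute_safety_check(judgments):
    """Aggregate per-candidate verdicts and produce a line for the log.

    judgments is a list of dicts with "candidate" and "verdict" keys.
    """
    counts = Counter(j["verdict"] for j in judgments)
    summary = {v: counts.get(v, 0) for v in ("PASS", "BLOCK", "REVIEW")}
    log_line = "PASS={PASS} BLOCK={BLOCK} REVIEW={REVIEW}".format(**summary)
    return summary, log_line


def view_safest_candidate(judgments):
    """Return a representative PASS candidate, or None if nothing passed.

    Assumption: the first PASS entry is used; no safety ranking is implied.
    """
    for j in judgments:
        if j["verdict"] == "PASS":
            return j["candidate"]
    return None
```

Returning `None` when nothing passed keeps the simplified action from ever surfacing a BLOCK or REVIEW candidate as a suggestion.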

Why This Structure is Necessary in Healthcare

In healthcare, the statistical accuracy of a recommendation alone is insufficient. What is required is:

Halting high-risk candidates.

Suspending candidates backed by insufficient information.

Explicitly defining the parameters that require human review.

Ensuring post-hoc auditability and explainability.

Therefore, the value of medical AI is not solely determined by its "smartness." Rather, without a definitive boundary that prevents the unverified admission of AI outputs, the entire system remains structurally vulnerable. This demo presents that boundary in its minimal, foundational form.

The Positioning of This Release

To describe this demo in a single phrase: It is not a demonstration of a convenient medical AI feature, but a gate-layer implementation demo designed to prevent the direct admission of AI candidates.

If the previous ADIC article was about establishing "clear responsibility boundaries," this release is its continuation: it shows how that boundary manifests as a tangible UI and as an explicit judgment boundary. It is a minimal example of moving from theory to practical implementation.

Summary

The core message of this demo is straightforward:

While AI can generate diagnostic or treatment candidates, they must never be admitted into clinical workflows unconditionally.

A strict governance boundary is required to mediate this process.

This boundary categorizes actions into Halt, Escalate, or Admit, and it immutably records the rationale for each decision.

What is vital in medical AI is not just candidate generation. It is fixing the admissibility conditions for AI outputs. ADIC can be demonstrated not only as a discourse on auditing and evidence but also as a governance layer equipped with a definitive admissibility boundary.

This demo visualizes that very concept within a clinical context.
